What Is Sales Enablement? The Complete Guide for Sales Leaders Who Need More Than a Definition

Sales enablement is everywhere. Actual results aren't. Here's what it means, why most programs fail, and how to build one that closes the Execution Gap.

Posted by

Secondbody.ai

Here's something worth admitting before we start. SecondBody sells AI sales training software. Our platform — Rory, our AI coach — is the thing reps practice with and the thing managers use to coach at scale. Which means we have a commercial stake in how you think about sales enablement, because the way you define enablement determines how you spend your budget.

Read everything below with that in mind. We're going to earn your trust the same way we always do: by being harder on our own category than anyone else in it. Most articles about sales enablement are written by enablement platforms selling their own definition. They write "enablement" and mean "content management" or "training technology" or "Salesforce integration." The word covers so much that it covers nothing precisely.

We're going to give you the definition that actually matters. Then we're going to tell you exactly why most enablement programs fail — including, where it's honest to say so, programs that use platforms like ours. Then we're going to give you a framework for thinking about what enablement is supposed to do and how you'll know if it's working. By the end, you'll have a sharper answer to the question in the title than most practitioners who've been running enablement programs for years.

What does sales enablement actually mean?

The standard industry definition is roughly: sales enablement is the practice of providing sales teams with the resources, content, and tools they need to engage buyers effectively. Most professionals in the space would accept that.

The problem is that "providing resources, content, and tools" describes an activity. It does not describe an outcome. And when organizations build their enablement programs around activity metrics — courses created, content pieces published, certifications completed, tools deployed — they end up with programs that are technically active and practically inert.

The LMS has 400 modules. Nobody watches them.

The battle cards are comprehensive. Nobody uses them on a live call.

The onboarding program is detailed and well-designed. New reps are still slow to quota.

Here's the definition that actually matters:

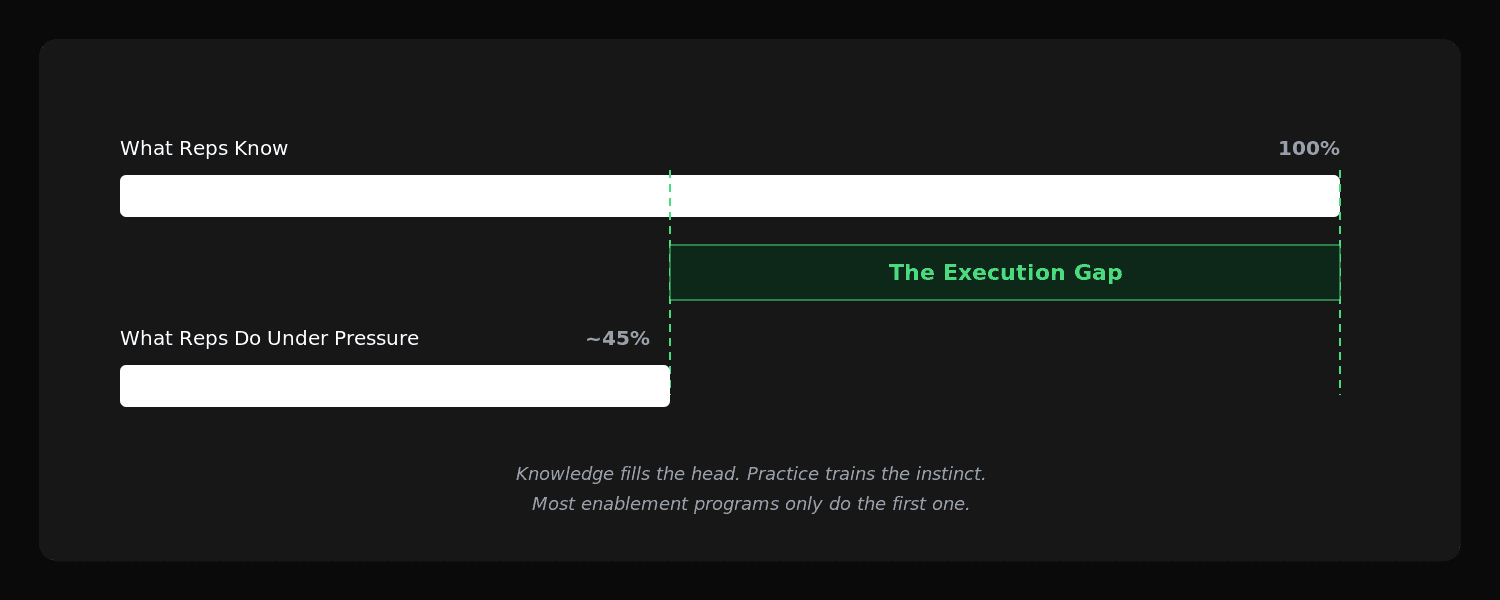

Sales enablement is the infrastructure that closes the gap between what reps know and what they can execute under pressure.

That word — execute — changes the entire orientation of the function. It shifts the focus from knowledge to capability. From access to readiness. From what reps have been given, to what they can actually do when a buyer says something unexpected at a moment that matters.

Here's what that looks like in practice.

A rep has been through the onboarding program. They completed every module in the LMS. They passed the certification. Their manager signed off on readiness. They get on their third week of real calls and a prospect says: "We were going with you, but your competitor just cut their price by 40%. You have one minute." The rep freezes. They deliver a half-formed response that sounds like they're reading from a note card. The deal dies.

That rep had knowledge. They had content. They had certification. What they did not have was the ability to execute under pressure. The gap between those two things — the gap between knowing and doing — is what good enablement exists to close.

We call that gap the Execution Gap. It shows up in every sales org, at every scale, in every industry. It's the reason enablement programs that look impressive on paper produce modest results in the field. The program filled the rep's head. It did not train their instincts. Those are different problems requiring different solutions.

For more on the category's history and what technically falls within its scope, there's a detailed overview in our sales enablement glossary entry.

What does the Execution Gap look like in practice?

The Execution Gap is not a single failure mode. It shows up at different stages of the selling motion and for different reasons.

The certification pass-fail illusion

A rep completes a training module on objection handling. They pass the quiz at the end. The system marks them certified. The manager checks the box. Six weeks later the rep is losing deals on the same objection the module covered.

What happened? The rep learned enough to answer multiple-choice questions about the objection. They did not practice handling the objection live, under pressure, with a real buyer who is skeptical and not going to wait for the rep to recall the right framework. The quiz measured recall. The real call measures instinct. Those are different skills, and a certification program trains one and ignores the other.

This is not a failure of the module or the content. It is a structural gap in how most enablement programs are designed. They're built for knowledge transfer, which is easy to measure and cheap to scale. They are not built for skill development, which requires repetition, feedback, and progressively harder practice conditions.

The quarterly-event hangover

Most orgs still run an annual sales kickoff and quarterly training days. These are real investments — venue, travel, speaker fees, and the cost of pulling a hundred people out of selling mode for a week. The content is often good. The energy in the room is real.

Six weeks later, the adoption rate on what was covered in that room is around 20-30%. Research on learning retention suggests people forget roughly 70% of new information within 24 hours and 90% within a week without active practice. (The skeptical note: those numbers are frequently cited by learning technology vendors. But even if you cut them in half, the underlying point holds. Single-event training doesn't stick.)

The quarterly event model is not broken because the events are bad. It's broken because it treats skills as something that can be downloaded in a day and applied indefinitely. Skills require repetition. Repetition requires frequency. You cannot get better at handling pricing objections by practicing twice a year.

The content library that nobody uses

Most mature enablement programs have a content library. Battle cards. Competitive positioning documents. Messaging guides. Email templates. Objection response frameworks. Many of these were built by capable people who put real effort in.

Adoption rates are typically low. Not because the content is bad, but because reps cannot easily access it at the moment they need it, don't have the instinct to reach for it under pressure, and were never trained to use it in live simulated scenarios. The content assumes the rep will consult the battle card mid-call. Reps don't consult battle cards mid-call. They rely on what's already wired into their instincts.

Building the battle card is not enablement. Training the rep to handle the scenario the battle card covers — under pressure, repeatedly, until the response is automatic — is enablement.

For more on why content-as-training breaks down, we've covered this in The Enablement Trap: Confidence Is Not a KPI.

Why do most sales enablement programs fail?

There are patterns. We've seen them clearly enough across organizations of different sizes to describe them precisely.

They confuse inputs for outcomes

Inputs: training hours, modules completed, content pieces published, certifications issued, tools deployed.

Outcomes: reps executing better on real calls. Pipeline moving faster. Win rates improving. Ramp time shortening. Retention going up.

Most enablement programs have a clear plan for inputs and a vague plan for outcomes. The quarterly report shows the number of sessions completed and the adoption rate of the new playbook. It does not show whether any rep is measurably more effective at discovery conversations than they were three months ago. That measurement is harder, and most programs haven't built it.

The result is organizations that spend hundreds of thousands of dollars on enablement each year without being able to demonstrate a skill effect. When the CFO asks whether the enablement budget is working, the answer is "we trained 180 people" rather than "we moved the team's average discovery score from 5.1 to 6.8 over the quarter." That's not the CFO's failure. That's an enablement program that has not connected its inputs to its stated outcomes.

They're built for the org chart, not the skills gap

Most enablement programs are organized around roles and categories: "SDR training," "AE certification," "manager enablement," "product update training." Each has a curriculum, a schedule, and a sign-off process.

The problem is that skill gaps don't map neatly onto org chart categories. The rep who needs help with discovery questions is not in the "needs discovery training" bucket — they're in the "finished discovery training and checked the box" bucket. The actual gap only becomes visible when you watch reps do the thing, not when you confirm they've been certified on it.

Good enablement starts with the skills gap, not the org chart. Which reps can't handle this specific moment under this specific condition? What does it take to close that gap? How will we know when it's closed? Those questions require skill-level visibility that most programs haven't built.

They separate enablement from the daily rhythm of selling

The most common structural failure: enablement happens at separate times from selling. Training is a thing you do on Tuesday afternoons. Selling is a thing you do the rest of the week. The two streams don't connect.

The programs that work are woven into the daily rhythm. A rep practices for 10 minutes before their first call. The practice surfaces a gap. The manager sees it in the Monday briefing. The coaching happens in the Tuesday async loop. By Thursday, the rep has drilled the gap and is applying the fix on real calls. The loop closes inside one week.

That's not what most programs are designed to do. Most programs are designed to run training events and publish content. Daily rhythm integration is an afterthought, if it's considered at all.

They don't measure execution under pressure

The diagnostic question: does your enablement program include a mechanism for watching reps perform a specific skill in a simulated high-stakes moment?

Not: watching them answer questions about the skill. Not: reviewing recorded calls where the skill came up and they failed it. Watching them perform the skill under simulated pressure before it matters on a real deal.

Most programs don't have this. The closest thing is live roleplay in training sessions, which creates its own problem — reps perform differently in front of their manager and peers than they do in a controlled private practice environment, and the sample size is too small to track improvement over time. What's needed is a controlled, repeatable practice mechanism. AI roleplay exists as a category specifically because the human-roleplay alternative doesn't scale to weekly frequency. We covered this in more depth in our guide to what AI sales training actually does.

How does sales enablement actually work?

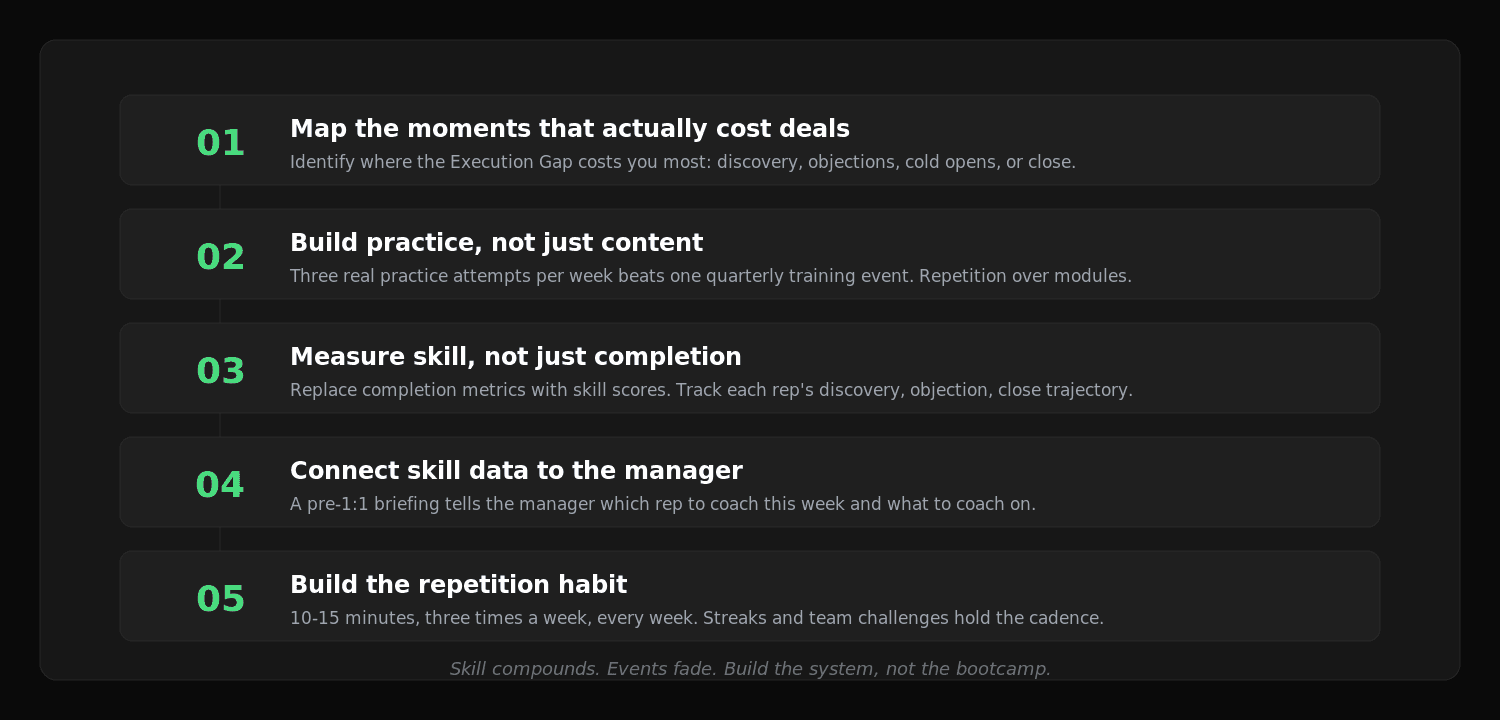

The mechanism for closing the Execution Gap is not complicated. It has five steps.

Step 1: Map the moments that actually cost deals.

Before building anything, identify the moments in your specific selling motion where the Execution Gap most commonly causes deals to stall or die. Is it at discovery, when reps fail to surface the real budget or the real success metric? Is it at objection handling, when reps fold on price pressure? Is it at cold opens, when reps can't hold 30 seconds of attention? Is it at close, when reps can't navigate procurement without discounting?

Most orgs have a rough sense of this. The better orgs have call data, win/loss analysis, and manager-level rep assessments that make it precise. Build your enablement program around the moments that matter most for your selling motion, not around a generic curriculum someone wrote three years ago for a different market.

Step 2: Build practice, not just content.

For each skill that matters, design a practice mechanism — not just a module. The module explains what to do. The practice mechanism makes the rep do it, repeatedly, under simulated pressure, until the behavior is automatic.

The mechanism can be human-led (manager roleplay, peer roleplay, live coaching sessions) or AI-led (platforms like SecondBody where reps practice voice-first with an AI buyer that pushes back and changes direction). The mechanism matters less than the repetition. Three genuine practice attempts per week on a specific scenario build skill faster than one quarterly training event covering the same topic.

Step 3: Measure skill, not just completion.

This is the shift that separates programs that produce outcomes from programs that produce reports. Replace completion metrics with skill metrics. Track each rep's performance on the scenarios they're running. What's their discovery score this week compared to six weeks ago? Where are they freezing? Where are they improving? Which reps have closed their gap and which ones haven't?

This level of measurement requires either very hands-on manager observation, which doesn't scale above six or seven reps, or tooling that tracks and scores rep performance automatically. The tooling choice depends on team size and budget. The measurement discipline is non-negotiable regardless of how you implement it.
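As a rough illustration of what "this week compared to six weeks ago" could look like in data, here is a minimal sketch. The rep names, the 0-10 scale, the scores, and the 0.5-point threshold are all hypothetical and would need to match whatever rubric or tooling you actually use.

```python
# Hypothetical weekly skill scores per rep on a 0-10 scale, oldest first.
# All names and numbers are illustrative, not real data.
weekly_scores = {
    "rep_a": [5.0, 5.2, 5.5, 6.0, 6.4, 6.8, 7.1],
    "rep_b": [6.1, 6.0, 6.2, 6.0, 5.9, 6.1, 6.0],
    "rep_c": [4.8, 4.6, 4.9, 4.5, 4.4, 4.2, 4.0],
}


def six_week_change(scores):
    """Return the latest score minus the score from six weeks earlier."""
    if len(scores) < 7:
        raise ValueError("need at least seven weekly scores")
    return round(scores[-1] - scores[-7], 2)


def classify(change, threshold=0.5):
    """Label a rep's trend; the threshold is an assumed cut-off."""
    if change >= threshold:
        return "improving"
    if change <= -threshold:
        return "declining"
    return "flat"


trend = {rep: classify(six_week_change(s)) for rep, s in weekly_scores.items()}
for rep, label in trend.items():
    print(rep, six_week_change(weekly_scores[rep]), label)
```

The point of the sketch is the comparison itself: a completion report cannot tell rep_a from rep_c, while a score trend can.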

Step 4: Connect skill data to the manager's coaching workflow.

The measurement is only valuable if it drives action. The skill data should surface directly to the manager in a format that tells them which rep to coach this week and what to coach on. Not a dashboard the manager has to interpret. A briefing that says: "Your team's objection-handling average is 5.8. The three reps furthest from target are X, Y, and Z. Here's what they're each stuck on."

This is the difference between visibility and information overload. Visibility means the right signal goes to the right person in a usable form. Most enablement programs produce data without solving the last-mile problem of getting that data into the manager's coaching decision at the start of the week.
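One way to picture the briefing described in this step is a short function that turns raw practice scores into the few lines a manager actually reads. This is only a sketch: the target score, rep names, scores, and "stuck on" notes are invented for illustration, and it is not a description of how any particular platform produces its briefing.

```python
from statistics import mean

TARGET = 7.0  # assumed target score on a 0-10 scale

# Hypothetical reps: latest objection-handling score and weakest scenario.
team = [
    {"rep": "Avery", "score": 4.9, "stuck_on": "price pushback at close"},
    {"rep": "Blake", "score": 5.2, "stuck_on": "competitor discount claims"},
    {"rep": "Casey", "score": 5.5, "stuck_on": "procurement delays"},
    {"rep": "Devon", "score": 6.0, "stuck_on": "budget timing"},
    {"rep": "Emery", "score": 6.4, "stuck_on": "feature comparisons"},
    {"rep": "Finley", "score": 6.8, "stuck_on": "contract length"},
]


def build_briefing(team, target=TARGET, top_n=3):
    """Summarize the team average and the reps furthest below target."""
    avg = round(mean(r["score"] for r in team), 1)
    furthest = sorted(team, key=lambda r: target - r["score"], reverse=True)[:top_n]
    lines = [f"Your team's objection-handling average is {avg}."]
    names = ", ".join(r["rep"] for r in furthest)
    lines.append(f"The {len(furthest)} reps furthest from target are {names}.")
    for r in furthest:
        lines.append(f"- {r['rep']} ({r['score']}): stuck on {r['stuck_on']}")
    return "\n".join(lines)


briefing = build_briefing(team)
print(briefing)
```

Note that the output is a ranked, capped list rather than the full table of scores. Capping it at three names is the design choice that keeps it a briefing instead of a dashboard.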

Step 5: Build the repetition habit, not the training event.

Skill development is not a sprint. It's a compounding habit. The program that produces sustained improvement is the one that gets reps practicing for 10-15 minutes three times a week, every week, not the one that runs intensive bootcamps twice a year.

The habit mechanics matter. Streaks, team challenges, weekly quests — not because gamification is magic but because habits need external structure, and the alternative (pure self-discipline in a competitive selling environment) fails for most reps most of the time. The teams that build consistent practice habits are the ones whose skill distributions shift meaningfully over a year. The teams that run occasional intensive training are the ones with the same distribution they had 18 months ago.

Who is sales enablement for?

Everyone in a sales org has a stake in enablement. But the design and delivery look different for different segments.

SDRs and BDRs

The highest-leverage enablement segment and historically the most neglected. SDRs have the highest churn, the steepest learning curve, and the most homogeneous skill challenge: cold opens, early objections, booking qualified meetings. They also have the most available practice time, because a day that doesn't generate connects is a day with real unstructured time that can be redirected.

An SDR enablement program that runs daily voice practice against realistic buyer personas and gives each rep a scored transcript from their last five attempts is more valuable than any certification curriculum. The gap is cold opens and early objection handling. The fix is repetition at volume. The tools exist. Most orgs haven't connected them to the SDR onboarding motion.

Account Executives

The skill challenges shift at the AE level. Discovery depth, demo storytelling, multithread navigation, competitive differentiation, pricing negotiation, close execution. The practice scenarios are harder to design and require more buyer persona sophistication.

AEs also tend to have more resistance to training, which makes skill development conversations more delicate. The manager who shows an AE that their discovery score is trending down in the data is more likely to get buy-in than the manager who tells the AE "you need to work on your discovery." Skill data creates a different kind of coaching conversation — one the rep is less likely to dismiss.

Sales managers

Managers are the multiplier in any enablement program. A manager with eight reps who coaches effectively produces eight improving reps. The same manager who coaches ineffectively keeps eight reps flat. The ROI on enabling managers well is enormous, and most orgs underinvest in it dramatically.

Manager enablement is about two things: giving them the skill-level visibility to coach specifically, and giving them a coaching workflow that fits inside a real work week. A manager who has to manually listen to eight call recordings per week to generate coaching inputs will sustain that for two weeks and then stop. A manager who gets a Monday morning briefing that says "here are the three reps to focus on and here's what each of them is stuck on" will sustain it for a year.

That manager workflow is what SecondBody was specifically built around. The briefing Rory produces from the prior week's practice data is the thing most managers tell us shifted their coaching from reactive to systematic. For more on this, see our coaching at scale page.

Enablement teams

Practitioners who design and run enablement programs often face a structural tension: they own the input metrics (training hours, content published, certifications completed) but the output metrics (rep skill improvement, win rate, ramp time) are owned by sales leadership. This creates programs that focus on the thing they're measured on rather than the thing that matters.

Enablement practitioners who want to change this need a way to measure skill outcomes directly. That means building measurement mechanisms into every program they design — not as an afterthought, but as the primary constraint. The enablement teams page covers how SecondBody fits into an enablement team's workflow specifically.

New hires

The highest-urgency use case and the one with the clearest ROI. Every week a new rep is not at full productivity is a week of revenue the org is leaving on the table. For an org hiring 20 reps per year at $150k OTE, shaving eight weeks off average ramp time produces several million dollars in earlier-quota-attainment value annually. The organizations that run structured daily practice from day one, track new hire skill scores weekly, and route that data to the hiring manager's coaching workflow will compress the ramp faster than any other approach.
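The ramp-time claim can be sanity-checked with simple arithmetic. The sketch below needs an annual quota per rep, which the text does not give, so it assumes quota is 5x OTE. That multiple is our assumption, and it treats every saved week as a full week of quota-level production, which is generous.

```python
def earlier_attainment_value(hires, annual_quota, weeks_saved):
    """Quota-level production gained by reaching full productivity sooner."""
    return hires * annual_quota * weeks_saved / 52


OTE = 150_000        # from the example in the text
QUOTA_MULTIPLE = 5   # assumption: quota as a multiple of OTE, not from the text
HIRES_PER_YEAR = 20  # from the example in the text
WEEKS_SAVED = 8      # from the example in the text

value = earlier_attainment_value(HIRES_PER_YEAR, OTE * QUOTA_MULTIPLE, WEEKS_SAVED)
print(f"${value:,.0f}")
```

Under these assumptions the figure lands in the low millions. A different quota multiple or cohort size moves it proportionally, so run it with your own numbers before quoting it to a CFO.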

What are the benefits of effective sales enablement?

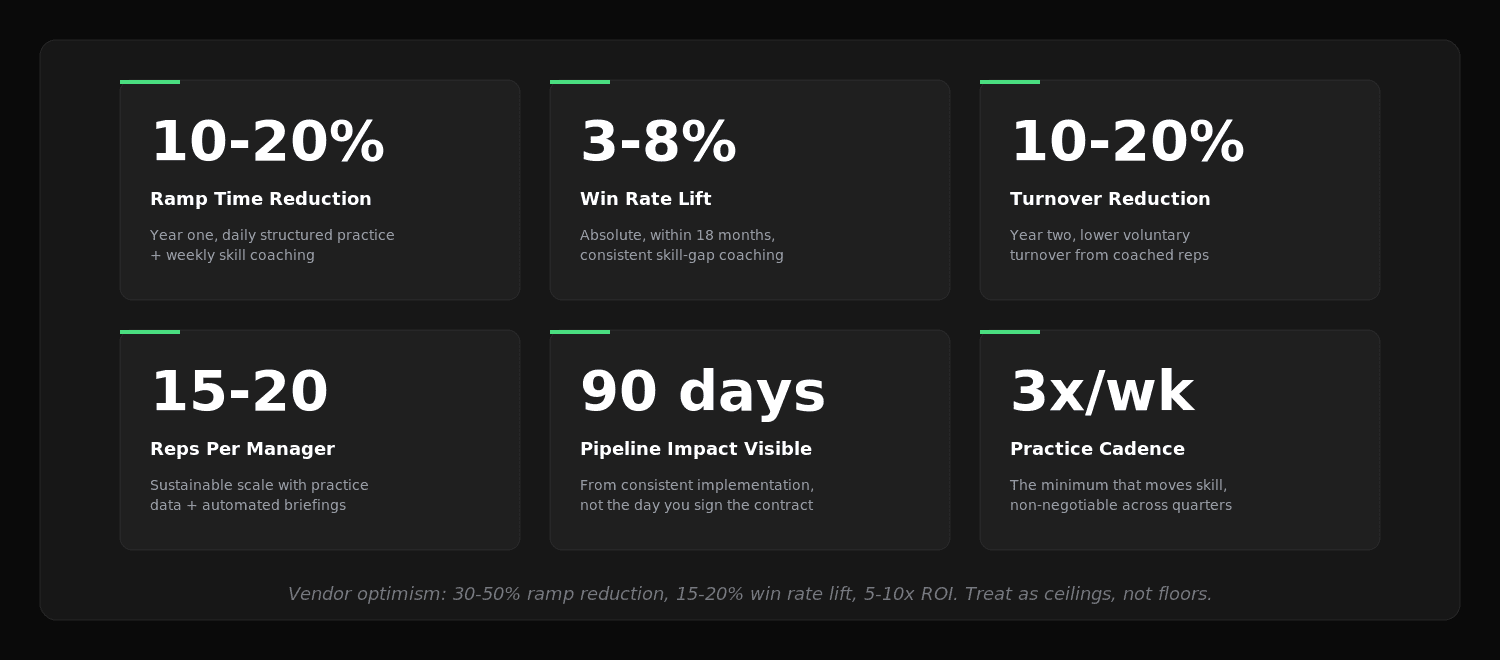

The optimistic case and the skeptical case, as always.

Faster ramp time

The optimistic case (vendor-sourced): organizations with structured enablement reduce rep ramp time by 30-50%.

The skeptical case: 15-25% ramp reduction is realistic for teams that run daily structured practice, track skill metrics weekly, and have a functioning manager coaching cadence. The 50% claims come from cherry-picked cohorts in ideal conditions. A 15% reduction on a six-month ramp is still nearly four weeks of earlier productivity per rep. For a team hiring 20 reps per year, that math becomes significant quickly.
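The arithmetic behind the skeptical case is easy to reproduce. This sketch reads "six-month ramp" as 26 weeks, which is our assumption, and simply multiplies through for a 20-hire year.

```python
def weeks_saved(ramp_weeks, reduction):
    """Weeks of earlier productivity per rep for a given ramp reduction."""
    return ramp_weeks * reduction


RAMP_WEEKS = 26      # assumption: six months read as 26 weeks
HIRES_PER_YEAR = 20  # from the example in the text

low = weeks_saved(RAMP_WEEKS, 0.15)
high = weeks_saved(RAMP_WEEKS, 0.25)
print(f"{low:.1f} to {high:.1f} weeks per rep")
print(f"{low * HIRES_PER_YEAR:.0f} to {high * HIRES_PER_YEAR:.0f} rep-weeks per year")
```

At the low end that is roughly four weeks per rep, or about 78 rep-weeks of earlier productivity across the cohort, which is why even the conservative estimate is worth planning around.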

Higher win rates

The optimistic case: well-enabled teams see win rate improvements of 15-20% within 12 months.

The skeptical case: win rate is a noisy metric with many inputs, including lead quality, market conditions, competitive dynamics, comp plan changes, product-market fit, and rep execution. Enablement affects one piece of that equation. We'd expect a 3-8 percentage point absolute win rate lift in the first 18 months from a program that genuinely builds rep skill. For a team running $20M in annual pipeline, moving from a 22% to a 25% close rate is worth $600k in additional closed revenue. That's a significant number on a reasonable enablement spend.
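The pipeline example works out as follows. The sketch holds pipeline size and deal mix constant, which is a simplifying assumption, and it also shows why "absolute" matters: a three-point absolute lift on a 22% base is a much larger relative change.

```python
def added_revenue(pipeline, old_rate, new_rate):
    """Extra closed revenue from a win rate change on a fixed pipeline."""
    return pipeline * (new_rate - old_rate)


def relative_lift(old_rate, new_rate):
    """The same change expressed relative to the starting win rate."""
    return (new_rate - old_rate) / old_rate


PIPELINE = 20_000_000  # from the example in the text
extra = added_revenue(PIPELINE, 0.22, 0.25)
rel = relative_lift(0.22, 0.25)
print(f"${extra:,.0f} additional closed revenue")
print(f"{rel:.1%} relative lift")
```

When a vendor quotes a win rate improvement, it is worth asking which of the two numbers they mean, because the relative figure will always look more impressive.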

Lower rep turnover

The optimistic case: reps who feel developed and effective stay 2-3x longer.

The skeptical case: the causal chain is real but the timeline is slow. Reps leave for two primary reasons: they're not hitting quota (often a skill gap problem) and they don't feel invested in by their organization (often a coaching gap problem). Both are enablement problems. Solving them reduces turnover. The honest timeline is 12-18 months before the effect is clearly measurable. Anyone claiming retention improvements in month three is extrapolating well beyond the data.

Manager leverage

The optimistic case: managers with good enablement infrastructure can coach 20-30 reps effectively instead of the typical 6-8.

The skeptical case: this depends entirely on the quality and format of the signal the manager has. A manager with good practice data, automated briefings, and async coaching workflows can genuinely scale to 15-20 reps without a meaningful quality drop. Above 25, quality starts to degrade regardless of tooling. The leverage is real. The ceiling is also real. Plan for both.

More accurate forecasting

The benefit nobody puts in their enablement pitch deck. When managers have skill-level visibility into their reps, forecast accuracy improves. Because they can separate "this deal is stuck because the rep is folding on the procurement objection — that's a skill gap I can close" from "this deal is stuck because the buyer's budget cycle shifted." Different causes, different responses, different forecast confidence. The accuracy lift is observable in the second quarter after the skill measurement layer is in place.

For more on measuring these outcomes specifically, we wrote about the five key metrics for measuring sales training ROI.

How is sales enablement different from sales training?

This confusion is consistent enough across buyers that it's worth being precise.

Sales training is a subset of sales enablement. Training is an event or a program — a new hire cohort goes through discovery training in week two of onboarding, or the team does a competitive positioning refresher in Q2. Training has a start date and an end date.

Sales enablement is the ongoing infrastructure. It includes training, but it also includes the content the rep accesses in the flow of work, the coaching mechanism the manager uses weekly, the practice cadence that keeps skills sharp outside of training events, and the measurement system that tells the team which gaps are closing and which aren't. Enablement is always running. Training runs when you schedule it.

The failure mode of treating training as enablement: the organization runs good training events and assumes the enablement job is handled. It isn't. The training event plants the seed. The enablement infrastructure waters it. Without the ongoing rhythm of practice, coaching, and measurement, training's effects decay within weeks.

| Dimension | Sales Training | Sales Enablement |
|---|---|---|
| Duration | Event-based (days, weeks) | Ongoing infrastructure |
| Delivery | Scheduled programs | Woven into weekly rhythm |
| Measurement | Completion, certification | Skill improvement over time |
| Ownership | L&D or enablement team | Enablement and sales management together |
| Effect timeline | Immediate, short-lived | Compounding over quarters |

The organizations with the best field performance treat training as one input into a broader enablement system, not as the system itself. The LMS is a component. The AI practice platform is a component. The manager coaching workflow is a component. None of them alone is the answer. The answer is the system they form together.

For more on why LMS alone doesn't solve the execution problem, see why LMS-based training breaks down in field sales.

How is sales enablement different from conversation intelligence?

Another common conflation. Worth separating cleanly because teams frequently buy one expecting the benefits of the other.

Conversation intelligence — Gong, Chorus, Clari Copilot — listens to real customer calls after they've happened. It transcribes, scores, and surfaces patterns. It tells managers and reps what happened on the call.

Sales enablement is a broader job. Conversation intelligence is one diagnostic input into enablement. It's excellent for identifying patterns — which objections come up most, where in the call deals tend to stall, what language top performers use that middle performers don't. But it doesn't give reps a way to practice the fix. A rep can listen to their call recording and understand precisely what went wrong. That understanding does not make them better at the next call. Getting better requires practice.

What conversation intelligence doesn't close is the Execution Gap itself. To improve, the rep needs to run the hard scenario again, under pressure, with feedback, and with a different approach. Recording review shows the problem. Practice closes it. Good enablement infrastructure includes both layers: the diagnostic tool that surfaces what the gap is, and the practice mechanism that actually closes it.

We wrote more on this distinction in our conversation intelligence glossary entry.

What ROI can you expect from sales enablement?

Two numbers to keep in mind.

The first is the industry benchmark. Consulting firms and technology vendors frequently cite studies showing 10-20% win rate improvements, 30-50% ramp time reductions, and 5-10x ROI on enablement spend. These numbers reflect the best implementations in the best conditions. Treat them as ceiling estimates.

The second is the realistic floor for a well-run program. Based on what we see in practice:

Ramp time reduction: 10-20% in year one from a program that runs structured daily practice and weekly skill coaching. 20-35% by year two once the practice habit is established across the team.

Win rate lift: 3-8 percentage points (absolute) within 18 months of consistent implementation, in programs where managers are coaching to specific skill gaps and tracking improvement over time.

Turnover reduction: 10-20% lower voluntary turnover in year two, concentrated in the reps who received consistent, specific coaching throughout the year.

The consistent pattern in programs that underperform: they deployed tools and content without the practice cadence, the skill measurement, or the manager coaching loop. A practice platform with 20% adoption produces 20% of the benefit. The tool alone doesn't produce the outcome. The system does.

What does a practical enablement cadence look like week to week?

Here's the weekly rhythm for a team that has gotten this right.

Monday: The sales manager reviews the prior week's practice data. The briefing — automated or manually compiled — tells them which reps had the biggest skill gaps and what they were stuck on. Five minutes. The manager has the week's coaching priorities before the first pipeline meeting.

Monday/Tuesday: Reps run their first two practice sessions of the week. Fifteen minutes each, voice-first against a scenario tied to the week's focus skill — cold open, pricing objection, discovery depth, whatever the team is working on. The AI scores the attempt. The rep sees their score and one specific observation.

Tuesday/Wednesday: The manager works through the priority list with async feedback. Ninety seconds per rep, focused on one specific thing. "You handled the interruption well but then over-explained the feature. Try attempt three and see if you can cut your follow-up response in half." Five reps, eight minutes of manager time. The reps respond async — some apply the fix that day, some take a day. The manager checks back when they have a moment.

Wednesday/Thursday: 1:1s. Pipeline and forecast take fifteen minutes. The remaining time goes to skill: "I saw your discovery scores dipped this week — what's going on with the buyer who pushed back on your timeline?" The 1:1 has real content. It's not just a forecast call.

Thursday: Third practice session of the week. Reps apply whatever the manager flagged on Tuesday. The manager checks the updated attempt score at end of day.

Friday: The manager closes out coaching notes. Reviews who improved, who's still stuck. Queues next week's scenarios. Fifteen minutes, then done.

This is a tractable week. It doesn't require ten extra hours of manager time. It doesn't ask reps to sit through an hour of training on top of a full day of calls. It's woven in. That's what sustainable enablement infrastructure looks like in practice — not a separate thing, but the operating system underneath the selling motion.

What are the most common enablement mistakes?

Six patterns we see consistently.

1. Deploying a tool and calling it a program. The LMS is live. The call intelligence platform is connected. The AI practice platform is provisioned. Six months later, adoption is 20% and no one is sure why. The tool is infrastructure, not the program. The program is the habit, the cadence, the measurement, and the coaching loop. Tools without a program produce adoption charts that look bad at the QBR.

2. Measuring completion and calling it success. "87% of the team completed the objection handling module" is not evidence that the team got better at objection handling. It's evidence that they watched a video. Track the number that actually matters: did their objection-handling scores improve on real practice sessions after the module versus before? That number tells you whether the enablement did its job.

3. Making practice optional. If practice is available but not required, reps will skip it when the quarter gets tight, the pipeline looks bad, or the calendar fills. Those are exactly the moments when practice matters most. The teams with the best skill improvement are the ones where a minimum practice cadence is non-negotiable — three attempts per week, every week, regardless of quarter pressure.

4. Coaching the leaderboard and ignoring the middle. Top reps get coaching attention because their deals are big and the manager wants to stay close to the quarter. Bottom reps get attention because they're in trouble and someone has noticed. The middle fifty percent — often the largest untapped performance pool in the org — gets neglected. Skill gap, not deal size, should drive coaching allocation.

5. Separating enablement from sales management. Enablement programs that run in parallel to sales management instead of through it produce half the result. The manager is the last-mile delivery mechanism for the skill coaching that actually closes the Execution Gap. An enablement program that generates great practice data but doesn't route it to the manager in an actionable format is doing half its job at best.

6. Skipping the methodology alignment step. An enablement program built around SPIN and a sales org that runs MEDDIC produces friction instead of progress. Before designing practice scenarios, confirm which methodology the sales motion actually runs — not what's in the handbook, what managers actually coach to on real calls. Scenarios that don't match the real selling motion produce scores that don't predict field performance.

For more on the failure patterns, we've written about why sales training fails under real pressure.

How to build a sales enablement program: a step-by-step guide

If you're starting from scratch or rebuilding after a program that didn't work, here's the sequence that gets results.

Week 1-2: Audit the current state honestly. Document what actually exists. How many minutes per week does each rep actually get in real, skill-focused coaching? What does a typical 1:1 cover — pipeline or skill? What is the rep practice cadence today (probably close to zero, but document it)? What does the manager actually know about each rep's specific skill gaps, beyond gut feel? Most orgs discover their existing enablement infrastructure is considerably thinner than the spending and the narrative would suggest.

Week 2-4: Map the Execution Gap precisely. Identify the two or three moments in the selling motion where the gap most commonly costs deals. Use call recordings, win/loss data, rep interviews, and manager input. Be specific: "reps freeze when buyers push back on price in the final close stage" is useful. "Reps need to get better at selling" is not. The gap you identify here defines everything else you build — scenarios, metrics, coaching focus, measurement.

Week 3-6: Build the practice mechanism. For each gap you identified, design a practice scenario. Decide whether you're running human-led roleplay, AI practice, or a combination. Set the minimum practice cadence: three attempts per week per rep on the focus skill. Don't try to cover every skill at once. One skill, done well and measured carefully, for one quarter, will outperform five skills done superficially across the same period.

Week 5-8: Implement the measurement layer. Define the skill metrics you'll track. If you're using an AI practice platform, scoring is automated. If you're running human-led roleplay, build a consistent rubric the manager uses every time. Establish baseline scores in week one of the quarter. Track weekly. Report to sales leadership monthly. This is the step most orgs skip, and it's the step that makes everything else defensible.

Week 8-12: Connect the data to the manager's coaching workflow. Build or configure the weekly briefing. The manager should start each Monday knowing which reps to focus on and what to coach specifically. The handoff from practice data to the manager's coaching decision is the most important integration in the system, more important than the practice platform choice and more important than the scenario design. Get this right before adding any other program components.

Quarter 2+: Iterate, expand, and maintain. After the first quarter, rotate to a new skill focus based on which gaps have closed and which remain open. Review which reps improved and which didn't, and understand why before deciding what to change. Add peer learning loops as a complement to manager-led coaching. Expand to a second team segment if the first is running smoothly. Treat the program as a product requiring maintenance, not a one-time deployment that runs on its own once launched.

The organizations that get real ROI from enablement are the ones that run this sequence, resist the urge to deploy everything simultaneously in week one, and measure skill outcomes from the beginning. The organizations that see poor ROI typically ran the deployment backwards — tools first, measurement never, practice optional.
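As a rough illustration of the handoff from practice data to the manager's weekly briefing, the sketch below ranks reps by remaining skill gap and flags anyone under the three-attempts-per-week cadence. The target score, field names, and sample data are hypothetical; a real rubric and data source would replace them.

```python
from dataclasses import dataclass

MIN_ATTEMPTS_PER_WEEK = 3  # minimum cadence described in the guide
TARGET_SCORE = 80          # hypothetical target on a 0-100 rubric


@dataclass
class RepWeek:
    name: str
    attempts: int    # practice attempts logged this week
    baseline: float  # score captured at the start of the quarter
    current: float   # average score for this week


def monday_briefing(reps, top_n=2):
    """Rank reps by remaining skill gap (not deal size) and flag missed cadence."""
    ranked = sorted(reps, key=lambda r: TARGET_SCORE - r.current, reverse=True)
    return [
        {
            "rep": r.name,
            "gap": TARGET_SCORE - r.current,
            "change_since_baseline": r.current - r.baseline,
            "below_cadence": r.attempts < MIN_ATTEMPTS_PER_WEEK,
        }
        for r in ranked[:top_n]
    ]


team = [
    RepWeek("ana", 4, 60, 74),
    RepWeek("ben", 1, 65, 64),
    RepWeek("cy", 3, 70, 82),
    RepWeek("dee", 2, 55, 68),
]
briefing = monday_briefing(team)
for row in briefing:
    print(row)
```

The ranking key is the one design choice that matters here: sorting by skill gap rather than by pipeline value keeps the middle of the team on the coaching list.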

Frequently Asked Questions

What is the simplest one-sentence definition of sales enablement?

Sales enablement is the infrastructure that closes the gap between what reps know and what they can execute under pressure. Everything else — the tools, the content, the training events — is a subset of that.

Is sales enablement a department or a function?

Both, depending on the organization. In larger orgs there's typically a dedicated enablement team that owns the content, tools, and programs. In smaller orgs, enablement is usually a shared function between sales leadership and whoever runs training. The function exists regardless of whether there's a formal team. What matters is that someone owns the skill outcomes, not just the activity inputs.

What's the difference between sales enablement and sales operations?

Sales operations owns the systems that track and analyze sales activity — CRM, reporting, compensation design, territory planning. Enablement owns the capability side: can reps execute the specific skills the org's sales motion requires? The two functions overlap on tooling decisions and data access but have different mandates. Operations tells you what's happening with deal flow and activity. Enablement determines whether the people running those activities are improving at the skills that drive outcomes.

How big does a sales team need to be to justify a dedicated enablement hire?

At roughly 20-30 reps, the ROI of a dedicated enablement hire starts to be clear. Below that, sales leadership typically handles enablement as part of the management job. Above 50 reps, the absence of structured enablement is usually a measurable drag on team performance. Above 100 reps, a formal enablement function is standard.

Does sales enablement work differently for inside sales versus field sales?

The principles are the same. The delivery differs. Inside sales teams have more available practice time (reps are at a desk with gaps in their day) and shorter feedback loops. Field sales teams have longer deal cycles and more buyer variability per scenario, which makes practice design more complex. Both segments benefit from structured practice and manager-side skill visibility. We cover the field sales motion specifically.

What does a good enablement tech stack look like in 2026?

For most orgs, three layers: a CRM for activity data, a conversation intelligence tool for call diagnostics, and an AI practice platform for rep skill development. Most orgs have the first and second. The third is the layer most frequently missing — and it's the one that actually closes the Execution Gap, because the other two diagnose problems without giving reps a place to practice the fix.

What's the biggest single change an enablement leader can make this quarter?

Add a minimum practice cadence. Pick the one skill gap that costs the most deals. Design two practice scenarios around it. Require three attempts per week per rep. Track scores and surface them to the manager weekly. Review patterns in week four and adjust. The orgs that add a weekly practice cadence to an existing enablement program consistently see more skill movement than those that add more content to an existing library.

How does SecondBody fit into a sales enablement program?

SecondBody is the practice and coaching layer. Rory runs voice-first practice scenarios for reps — cold calls, discovery, objection handling, closing moments — and scores each attempt against the methodology the org uses (SPIN, MEDDIC, Sandler, or a custom rubric). Managers get a pre-1:1 briefing that surfaces which reps to coach this week and what to coach on. One manager can carry 20-30 reps that way without the coaching quality degrading.

SecondBody doesn't replace a conversation intelligence tool, an LMS, or a playbook. It fills the gap those tools don't fill: structured, scored, voice-first practice that a rep can run daily and a manager can review in five minutes per rep.

What does SecondBody cost?

Public pricing is on the website. Pro tier is $30/user/month with unlimited seats — practice isn't rationed by user count. There's a starter tier you can begin with today, and a demo path where you run real practice sessions and see what the manager briefing looks like before committing. Customers include Cyera and others operating under NDA.

Is SecondBody compliant with enterprise security requirements?

SOC 2 compliant. Current integrations include Aircall, Cloudtalk, Momentum.io, Fathom, and TeamTailor. Salesforce and HubSpot integrations are shipping in 2026. Details on our trust center.

How long does it take to see results from a new enablement program?

Practice engagement and early skill score movement: visible by week four to six. Manager coaching quality, with skill data driving the agenda: measurable by week eight if baseline data was captured on day one. Pipeline impact: 90 days from consistent implementation. Win rate effects, if they're coming, take 12-18 months. Anyone promising clean win-rate lift in month one is selling something that the data doesn't support. The ROI from good enablement compounds. Plan for the year.

What's the most common misconception about sales enablement?

That it's a content problem. Most organizations respond to "our reps aren't executing well" by creating more content — more battle cards, more playbooks, more modules. The content isn't the gap. The practice is. Reps who can't execute under pressure don't need more information to read. They need more repetitions of the hard skill before the pressure is real.

Close the Execution Gap.

Here's what the research, the practitioner experience, and the implementations we've watched all point to: the organizations winning on sales performance in 2026 are not the ones with the most sophisticated content libraries. They're not the ones with the most tools in their stack or the most certifications on record. They're the ones where reps practice the hard moments before those moments happen on a real deal. Where managers arrive at each week knowing exactly which rep to coach and what to work on. Where the Execution Gap — the distance between knowing what to do and being able to do it under real buyer pressure — is being actively closed, rep by rep, skill by skill, week by week.

That's what sales enablement is supposed to do. Most programs are not doing it. They're providing resources, content, and tools. They're measuring completion and coverage. They're checking boxes. The Execution Gap stays open. The deals keep dying at the same moments they always died.

The fix is not complicated. It is a practice cadence, a skill measurement layer, and a coaching loop that gives managers the signal they need to coach specifically rather than generally. If your team wants to build that, SecondBody has a tier you can start with today. Or book a demo and see what a Monday morning briefing from Rory looks like for your manager — what it looks like when the coaching priority list is already triaged before the week starts. Either way, next quarter is going to happen. The Execution Gap will either be closing or staying open. Make sure you know which one it is.

Good luck out there.
