AI Sales Roleplay Training: The Complete Guide for Sales Leaders Choosing Between AI and Human Practice in 2026

AI sales roleplay training, without the marketing fog. Voice AI vs human practice, AI sales coaching ROI, and what actually builds rep reflexes.

Posted by Secondbody.ai

Here's something worth admitting before we go any further. SecondBody makes AI sales roleplay training software. We sell it. Rory, our AI coach, runs voice-first roleplays for cold calls, discovery, objection handling, and closing. So when you read a 7,000-word sales roleplay tool comparison on our own website — covering AI roleplay, human roleplay, and where AI sales coaching actually fits — you should assume bias and read everything below with that context.

We're going to try to earn your trust the same way we always do — by being harder on our own category than anyone else in it. AI sales roleplay training is not magic. It does not replace good managers. It does not work if you bolt it on top of a broken sales process and expect ramp time to drop. We've watched enough rollouts to see where it works, and where it gets installed, ignored, and quietly cancelled six months later.

Most articles on this topic are written by vendors making the maximalist case. "Throw out human roleplay. AI does it better." That's not the honest answer. The honest answer is that human roleplay had a real job and AI roleplay does that job better in some specific ways and worse in a few others. We'll cover both.

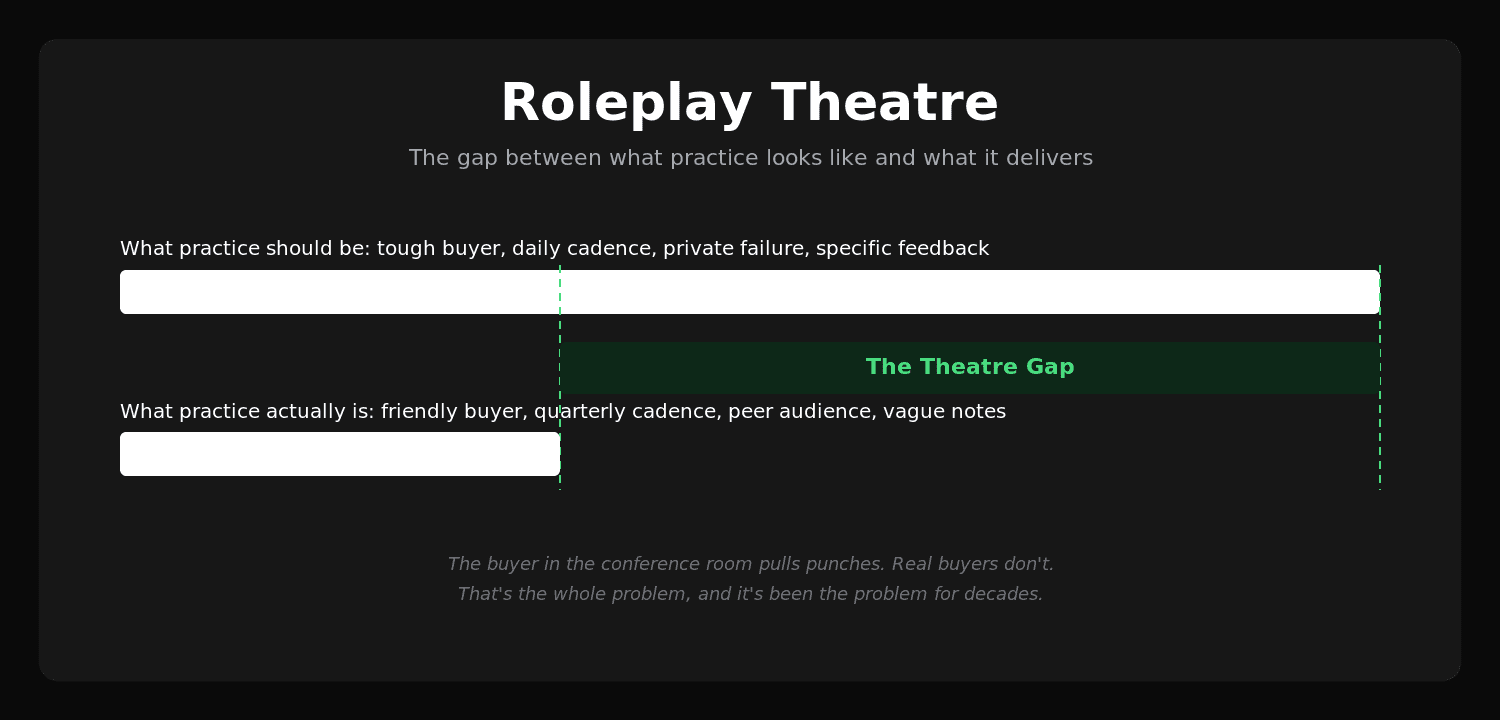

The thing nobody says out loud is this: most sales training programs run something they call "roleplay" that is actually a different activity entirely. We're going to give it a name in this piece — Roleplay Theatre — and use it as a frame for the whole article. If your team's roleplay sessions are Roleplay Theatre, swapping in a better tool won't fix anything. The format itself is the problem.

What is AI sales roleplay training?

AI sales roleplay training is structured practice where a sales rep has a live, voice-based conversation with an AI buyer persona, gets scored on the response, and tries again. The AI plays the role of a prospect, customer, or stakeholder — a skeptical CFO, a hostile procurement officer, a champion who's gone cold, a price-shopping inbound lead — and pushes back the way a real buyer would. The rep talks. The AI talks back. After the conversation, an AI coach reviews what happened and surfaces what to fix.

That's the clean definition. Here's what it actually means in practice.

A new BDR at a Series B SaaS company gets assigned an AI roleplay before her first day of live calls. The scenario is a cold outbound to a VP of Engineering at a mid-market company. She has 90 seconds to earn a discovery slot. She opens with the script she rehearsed. The AI buyer interrupts at second 14 with "I'm in a meeting in three minutes — what is this?" She panics, drops the script, says something about "exploring observability solutions." The AI buyer says "we already use Datadog, send me an email," and the call ends. She gets a score, a transcript with timestamps, and three specific things to try differently. She runs the same scenario four more times that morning. By the fourth attempt, she handles the interruption cleanly.

That's a useful AI sales roleplay training session. The buyer didn't pull punches. The rep failed in private and recovered. The reps next to her at the office didn't see her stumble. The whole loop took twenty minutes.

A bad AI sales roleplay session looks like a chatbot reading a buyer-persona prompt with no inflection, accepting any answer the rep gives, and producing generic "good job, try to ask more questions" feedback. The category is wide. Quality varies a lot. We'll cover what to look for later in this piece.

The reason AI sales roleplay training exists as a category is that human roleplay — the workshop kind, the manager-and-rep-in-a-conference-room kind — has a structural problem we'll get into next. The instinct behind human roleplay is correct. The execution has always been the issue.

For an overview of the broader category, we wrote a longer piece on what AI sales training is.

Why does most human roleplay fail?

The phrase we'll use throughout this piece is Roleplay Theatre. Roleplay Theatre is what happens when an organization runs a session that looks like roleplay, feels like roleplay, gets logged in the LMS as roleplay — and produces none of the things roleplay is supposed to produce. It's training that performs the appearance of practice without delivering practice.

Here's how it shows up.

The buyer is friendly

When a colleague or a manager plays the buyer in a roleplay, they pull punches. They don't want to be the person who made a teammate look bad in front of the room. They accept weak answers. They ask the obvious follow-ups but not the brutal ones. They go off-script the moment the rep struggles, in a way that feels generous in the moment and is catastrophic for the rep's preparation.

A real buyer is not generous. A real buyer is busy, distracted, sometimes annoyed that you called, sometimes interested for reasons you didn't anticipate, and almost always thinking about their own day rather than your demo. The fake buyer in the conference room is none of those things. The simulation doesn't simulate.

The audience is wrong

In real sales, the pressure comes from the buyer. The rep is anxious because the deal might slip, they might miss quota, the prospect might say no. In Roleplay Theatre, the pressure comes from the colleagues watching. The rep is anxious because they might look stupid in front of the team. These are completely different kinds of pressure, and getting comfortable with one does not prepare the rep for the other.

We wrote about this in a longer piece on the psychology of AI roleplay — the social-evaluation pressure of a peer audience does not transfer to the high-stakes-buyer pressure of a real call. You're not training the right reflexes. You're training the rep to perform under observation, which they've already been doing since fifth grade.

The cadence is broken

In our experience, a typical sales rep does roleplay somewhere between two and six times in a normal year outside of formal onboarding. That's it. Two to six attempts per year at the most economically important skill in the job. A junior pianist would not get good with that cadence. A junior surgeon would not get good with that cadence. A junior anything would not.

The cadence is broken because human roleplay requires a manager's calendar, a quiet room, sometimes an audience, and forty-five minutes of dedicated time. Those are expensive ingredients. They get rationed. Practice gets rationed. Rationed practice is theater.

The feedback decays

Even when human roleplay is run well — a tough buyer, a small audience, a thoughtful manager — the feedback at the end is verbal, generic, and forgotten by Tuesday. "You should ask more discovery questions before you pitch." Sure. Which questions? At what point in the conversation? With what phrasing? Tied to which moment in this specific call I just did? None of that survives the drive home.

This is the part of Roleplay Theatre that's hardest to fix with effort alone. Memory decay is just memory decay. By the time the rep is on a real call where they could apply the lesson, the lesson has faded into "be more curious."

The coaching capacity isn't there

Roleplay Theatre persists partly because the alternative (high-volume, high-quality, individualized roleplay) would require coaching capacity most organizations don't have. A manager with eight reps cannot run weekly one-on-one roleplays with each of them and still do their actual job. So roleplay gets cut to quarterly. Then to onboarding only. Then to "we'll do roleplay if there's time at the team offsite."

This isn't a moral failing of managers. It's an arithmetic problem. Every minute spent on roleplay is a minute not spent on a forecast call, a deal review, a customer escalation, a hire. The math doesn't work and never has.

We wrote about this dynamic in a piece on why sales training fails under real pressure.

So when sales leaders ask "should we do more human roleplay" — the question already contains the failure mode. The answer is no, not because human roleplay is useless, but because the version that scales in your org will be Roleplay Theatre and Roleplay Theatre is a tax, not a benefit.

What does AI sales roleplay training actually do differently?

Now the harder question. What changes when you swap human roleplay for AI roleplay?

The buyer doesn't pull punches

An AI buyer persona can be calibrated to the exact difficulty level your reps actually face in the wild. A skeptical CFO who pushes back on every ROI claim. A gatekeeper who's heard every opener and is bored. A champion who's gone cold and is dodging your calls. A procurement officer demanding a 30 percent discount with no business case attached.

The AI doesn't know the rep socially. It doesn't care if the rep looks bad. It doesn't soften the question because the rep just had a tough quarter. It pushes back the way a real buyer pushes back, which is the only kind of pushback worth practicing against.

This sounds like a small thing. It's actually the structural fix. The single biggest reason Roleplay Theatre is theater is that the buyer is friendly. Replace the friendly buyer with an AI that doesn't care about social dynamics, and the practice becomes practice.

The cadence becomes daily

AI sales roleplay training doesn't require a manager's calendar. It runs at 7am before a big call, at lunch after a tough rejection, on a Sunday night when the rep can't sleep about Monday's pitch. Practice happens when it's most useful, which is almost never the second Tuesday of the quarter.

The honest framing is this: if you want a rep to get good at handling the "we're happy with our current vendor" objection, they need to handle it 50 times under pressure, not twice. AI roleplay is the only mechanism we've seen that makes 50 repetitions achievable inside a normal sales week. Twice is theater. Fifty is practice.

The privacy unlocks honest failure

This is the underrated benefit. Newer reps would often rather give a safe, memorized answer than risk looking foolish in front of their manager. So in human roleplay they fall back on scripts. They don't experiment. They don't try the risky line. They stick to what they're sure won't get them mocked.

In a private AI roleplay, there's no audience. They can fail. They can try the bold close, the unconventional question, the slightly weird empathy line they've been wondering about. They can fail eight times and learn what each failure mode feels like. The next morning, when a real buyer says something unexpected, the rep has eight failed experiments to draw on rather than two carefully memorized scripts.

We've watched this pattern enough times to be confident in it. Reps in private AI practice take more risks, learn faster, and arrive at their first real call with a wider range of recoverable mistakes already encoded.

The feedback is immediate and specific

After every roleplay, an AI coach reviews the actual transcript and surfaces what landed, what didn't, and what to try next. Not "be more curious." Specific things. "At 2:17 you pivoted to demo mode after the prospect said 'tell me more about how you handle X' — that was a buying signal you skipped past. Try asking what specifically prompted the question." Then the rep can run the same scenario again with that one fix in mind.

This is how skills compound. You don't get better at sales by hearing generic feedback after a quarterly workshop. You get better by getting one specific correction, applying it in a fresh attempt twenty minutes later, getting another correction, applying that. The compounding only works when the feedback loop is short. AI sales roleplay training closes the loop.

The data shows up

Every roleplay produces a transcript, a score, and a set of categorized observations. After eight weeks, a manager can see that Rep A is great at discovery and weak at procurement objections. Rep B handles cold calls fluently but freezes when buyers ask about pricing. Rep C scores well on individual moments but loses the thread on multi-stakeholder conversations.

This data didn't exist before. Managers used to coach from gut feel and one-off observations. With AI sales roleplay, the gut feel is replaced by patterns visible across hundreds of practice attempts. That's a different conversation in a 1:1.

That's the part SecondBody specifically built around — the AI coach Rory produces a manager briefing every Monday morning that says "this week, focus your 1:1 with Maya on objection handling, here are the three transcripts where she froze." One manager can coach 20-30 reps that way, which is the only honest path to making coaching scale.
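The kind of briefing described above can be approximated with a simple aggregation over a week of practice attempts. This is a minimal sketch, not how Rory actually builds its briefings: the `Attempt` record, the skill labels, and the rule of picking each rep's lowest-average skill plus its three lowest-scoring transcripts are all hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import NamedTuple


class Attempt(NamedTuple):
    rep: str
    skill: str           # hypothetical skill label, e.g. "discovery"
    score: float         # 0-100 rubric score for this attempt
    transcript_id: str


def weekly_briefing(attempts: list[Attempt], top_n: int = 3) -> dict[str, tuple[str, list[str]]]:
    """For each rep, return (weakest skill, ids of its lowest-scoring transcripts)."""
    grouped: dict[str, dict[str, list[Attempt]]] = defaultdict(lambda: defaultdict(list))
    for attempt in attempts:
        grouped[attempt.rep][attempt.skill].append(attempt)

    briefing = {}
    for rep, skills in grouped.items():
        # Weakest skill = lowest average score across this week's attempts
        weakest = min(skills, key=lambda s: mean(a.score for a in skills[s]))
        worst = sorted(skills[weakest], key=lambda a: a.score)[:top_n]
        briefing[rep] = (weakest, [a.transcript_id for a in worst])
    return briefing


week = [
    Attempt("Maya", "discovery", 82, "t1"),
    Attempt("Maya", "objection_handling", 45, "t2"),
    Attempt("Maya", "objection_handling", 38, "t3"),
    Attempt("Maya", "objection_handling", 61, "t4"),
    Attempt("Maya", "objection_handling", 50, "t5"),
]
print(weekly_briefing(week))
```

Averaging per skill, rather than looking at the single worst attempt, keeps one bad session from dominating the 1:1 agenda; the lowest-scoring transcripts then give the manager concrete moments to review.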

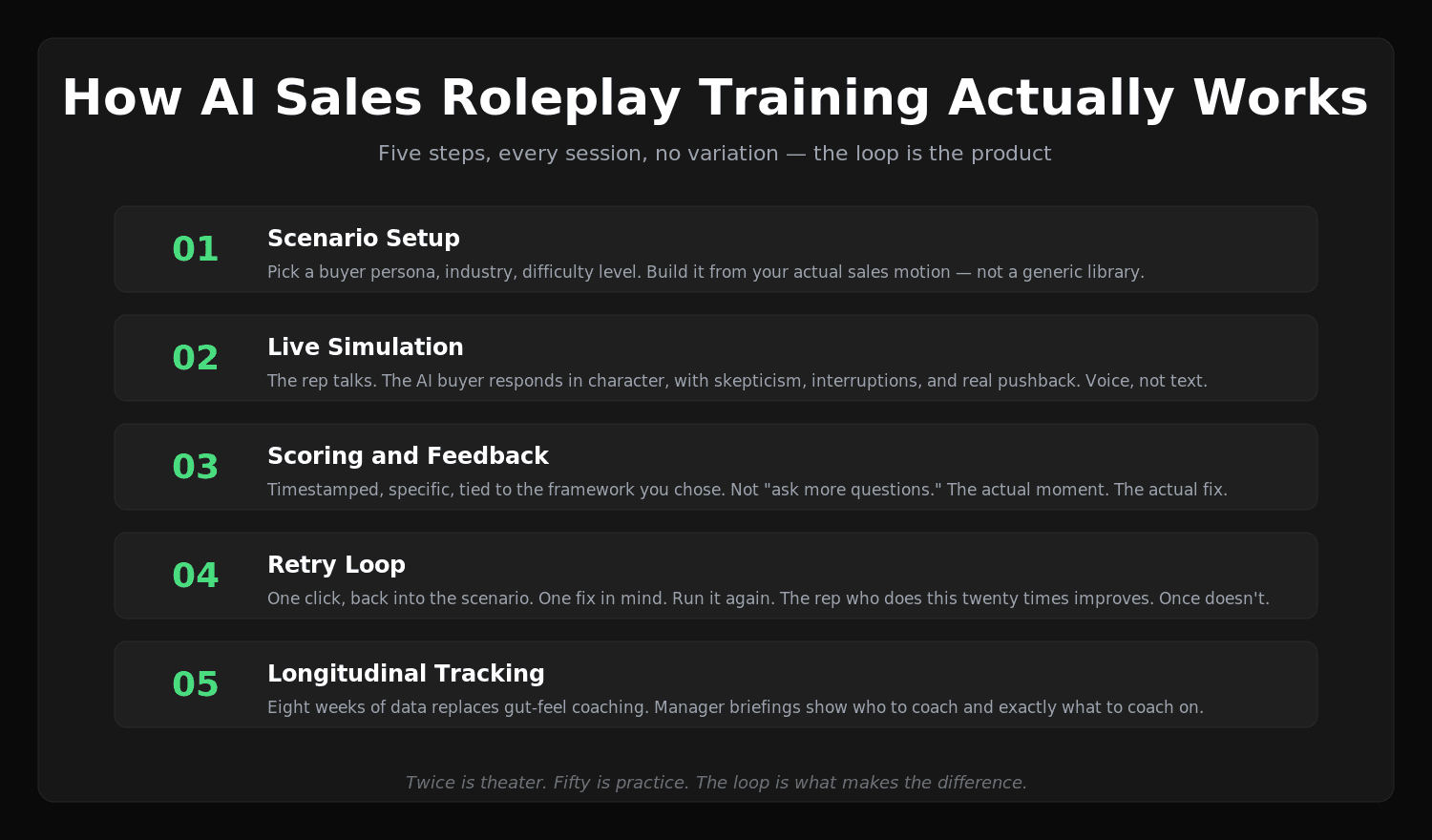

How does AI sales roleplay training actually work?

Most platforms follow a similar five-step loop. The differences are in execution, but the shape is consistent.

Step 1: Scenario setup

The rep — or their manager — picks a scenario. A cold call to a specific buyer persona. A discovery call with a particular industry. An objection handling drill on a specific objection. A negotiation with a procurement committee. The scenario carries metadata: industry, company size, buyer role, methodology to apply, difficulty level.

The good platforms let you build scenarios from your actual sales motion — your ICP, your sales stages, your real objections. The bad platforms ship a generic library that has nothing to do with your specific buyers. If you sell into healthcare CIOs, generic "tech buyer" practice is barely better than no practice. Specificity matters.
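The scenario metadata described above can be sketched as a small data structure. This is a minimal illustration assuming a hypothetical in-house schema: the `Scenario` class, its field names, the `Difficulty` levels, and the `is_specific` check are made up for this example and are not any platform's actual format.

```python
from dataclasses import dataclass, field
from enum import Enum


class Difficulty(Enum):
    # Hypothetical three-step scale, from a warm lead to a hostile committee
    WARM = 1
    SKEPTICAL = 2
    HOSTILE = 3


@dataclass
class Scenario:
    """Hypothetical scenario record; every field name is illustrative."""
    name: str
    industry: str
    company_size: str
    buyer_role: str
    methodology: str          # e.g. "MEDDIC", "SPIN", "Sandler", or a custom framework
    difficulty: Difficulty
    objections: list[str] = field(default_factory=list)  # objections your reps actually hear

    def is_specific(self) -> bool:
        # Crude proxy for specificity: a generic scenario names a placeholder
        # buyer and carries no real objections from your own pipeline.
        generic_roles = {"buyer", "tech buyer", "prospect"}
        return bool(self.objections) and self.buyer_role.lower() not in generic_roles


cold_call = Scenario(
    name="Cold outbound to VP of Engineering",
    industry="SaaS",
    company_size="mid-market",
    buyer_role="VP of Engineering",
    methodology="MEDDIC",
    difficulty=Difficulty.SKEPTICAL,
    objections=["I'm in a meeting in three minutes, what is this?",
                "We already use Datadog, send me an email."],
)
print(cold_call.is_specific())
```

The check is deliberately crude. The real test is whether the scenario would make sense unchanged at another company, which no single field can capture.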

Step 2: Live simulation

The rep starts the call. The AI plays the buyer. They talk for somewhere between 90 seconds and twelve minutes depending on the scenario. The AI responds in real time, in character, with the personality and skepticism the scenario specified. The rep uses voice. There's pressure. The AI doesn't pull punches.

The voice-first part matters more than text-first AI training tools want you to believe. Sales is a voice job. Reps react to spoken pressure differently than to pressure in a chat window. Practice that doesn't simulate the medium doesn't simulate the job.

Step 3: Scoring and feedback

The AI scores the conversation against a rubric. Good platforms let you pick the rubric — MEDDIC, SPIN, Sandler, your custom framework. The score isn't a number on a vibes scale. It's broken down by phase: opening, discovery, value articulation, objection handling, close. The rep sees where they scored well and where they didn't.

The feedback is specific. Timestamped. Tied to actual moments in the transcript. "At 4:32 you let the buyer reframe the conversation around price before you'd anchored value, and by then your recovery options were limited. Anchoring value earlier in the call would have been the move." That kind of feedback. Not "you could improve your discovery questions."
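The phase-by-phase breakdown can be illustrated with a minimal weighted-rubric sketch. The five phases come from the text; the weights, the 0-100 scale, and the `score_call` function are assumptions made for illustration, not how any particular platform computes its scores.

```python
# Phases named in the text; the weights are hypothetical and would come from
# whichever rubric (MEDDIC, SPIN, Sandler, custom) the team configures.
PHASE_WEIGHTS = {
    "opening": 0.15,
    "discovery": 0.30,
    "value_articulation": 0.20,
    "objection_handling": 0.20,
    "close": 0.15,
}


def score_call(phase_scores: dict[str, float]) -> tuple[float, str]:
    """Return the weighted overall score (0-100) and the weakest phase.

    phase_scores maps each phase name to a 0-100 score from the grader.
    """
    missing = PHASE_WEIGHTS.keys() - phase_scores.keys()
    if missing:
        raise ValueError(f"missing phase scores: {sorted(missing)}")
    overall = sum(weight * phase_scores[phase] for phase, weight in PHASE_WEIGHTS.items())
    weakest = min(PHASE_WEIGHTS, key=lambda phase: phase_scores[phase])
    return round(overall, 1), weakest


overall, weakest = score_call({
    "opening": 80,
    "discovery": 70,
    "value_articulation": 40,
    "objection_handling": 55,
    "close": 60,
})
print(overall, weakest)
```

Returning the weakest phase alongside the total is the point: a single number says how the call went, while the phase breakdown says what to fix on the next attempt.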

Step 4: Retry loop

This is the part most people skip when they describe AI roleplay. The point of AI roleplay isn't a single attempt. It's twenty attempts. The rep runs the same scenario again with one fix in mind. Then another. They watch their score climb across attempts. They feel themselves getting better. The reps who do this are the ones who improve. The reps who do one attempt and move on are running Roleplay Theatre with extra steps.

The good platforms make the retry loop frictionless. One click and you're back in the scenario. The bad platforms bury the retry behind menus and friction. Friction kills practice. Practice that's frictionless gets done.

Step 5: Longitudinal tracking

After eight weeks, the platform shows the rep — and the manager — how they've progressed. Score trends per skill. Time spent. Scenarios attempted. Patterns visible across the data. The manager pulls this report into the weekly 1:1 and uses it to direct the next week's practice. Which is how a manager with 20 reps actually coaches all 20 of them, instead of pretending to.
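A score trend per skill can be as simple as a least-squares slope over weekly averages. The sketch below assumes one average score per skill per week on a 0-100 scale; the `trend_per_week` and `flag_plateaus` helpers and the half-point-per-week threshold are hypothetical choices for illustration.

```python
def trend_per_week(weekly_scores: list[float]) -> float:
    """Least-squares slope of score against week index, in points per week."""
    n = len(weekly_scores)
    if n < 2:
        raise ValueError("need at least two weeks of scores")
    x_bar = (n - 1) / 2
    y_bar = sum(weekly_scores) / n
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(weekly_scores))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return num / den


def flag_plateaus(skill_scores: dict[str, list[float]], min_slope: float = 0.5) -> list[str]:
    """Skills whose scores are improving by less than min_slope points a week."""
    return sorted(skill for skill, scores in skill_scores.items()
                  if trend_per_week(scores) < min_slope)


eight_weeks = {
    "discovery": [50, 53, 56, 59, 62, 65, 68, 71],           # steady climb
    "pricing_objections": [58, 60, 57, 59, 58, 60, 59, 58],  # flat
}
for skill, scores in eight_weeks.items():
    print(skill, round(trend_per_week(scores), 2))
print(flag_plateaus(eight_weeks))
```

A slope is less sensitive to one unusually good or bad week than comparing the first and last scores, which makes it a steadier signal for deciding where next week's practice should go.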

The five-step loop is the standard pattern. If a platform you're evaluating doesn't have all five clearly, that's a tell. We've seen platforms that are great at simulation and terrible at scoring. Great at scoring and bad at retry. The whole loop has to work or none of it does.

For more on the practical mechanics, we wrote a separate piece on fixing tech overload in sales role-play.

What separates good AI sales roleplay training from theater?

Not all AI roleplay clears the bar. Here's what separates useful practice from a chatbot wearing a buyer-persona hat.

Scenario specificity

Generic "practice selling" prompts produce generic results. Effective AI sales roleplay is built around the actual conversations your reps have. Specific buyer personas tied to your ICP. Specific objections your reps actually hear. Specific pricing structures, competitive dynamics, regulatory constraints, industry vocabulary. If a scenario could run unchanged at a different company, it's not specific enough.

The platforms that let you build custom scenarios in 15 minutes are the ones that get used. The platforms that require professional services to build a scenario are the ones that get installed and never touched.

Pressure calibration

A roleplay where the buyer accepts every answer is theater. A roleplay where the buyer rejects every answer is also theater — it's just a different kind of demoralizing. Good platforms let you set difficulty across a spectrum. A warm inbound lead. A skeptical mid-market evaluator. A hostile procurement committee. Reps practice across the range, not just on the easy or the impossibly hard.

Methodology alignment

If your team uses MEDDIC, the AI feedback should evaluate responses against MEDDIC criteria. If you use SPIN, the questions should be scored as Situation/Problem/Implication/Need-payoff. If you have a homegrown methodology, the platform should learn it. Generic "good communication" feedback isn't enough.

We wrote a piece on MEDDIC implementation with AI roleplay that goes deeper on this.

Conversation continuity

The best AI roleplay runs full conversations, not isolated objection responses. Reps need to practice the arc of a call — the open, the discovery, the pivot, the close — not just individual moments stitched together. A platform that only does single-turn objection drills is solving 20 percent of the problem.

Voice, not text

This is non-negotiable in our view. Sales is a voice job. Practicing in chat is practicing for a different job. Some platforms started as text-based and bolted on voice as an afterthought. You can tell — the voice feels stilted, the latency is wrong, the prosody is flat. Voice-first platforms feel like a real call. Voice-bolted-on platforms feel like reading a transcript out loud.

Manager-side workflow

Practice that doesn't surface to managers is practice that doesn't compound into team improvement. The best platforms produce manager-side reports that turn raw practice data into "here's who to coach this week, here's what to coach on." Without that, you've automated the rep side of practice and left the manager side broken. Half a fix is sometimes worse than no fix because it generates the appearance of progress without the actual progress.

Together, these are what distinguish AI sales roleplay training from AI sales roleplay theater. The difference is structural, not a matter of marketing.

Who is AI sales roleplay training for?

Not every team needs the same level of investment. Honest segmentation:

New BDRs and SDRs

The clearest fit. New BDRs need volume of practice on a small set of high-frequency scenarios: cold opens, gatekeeper objections, qualifying questions, common pricing pushback. The cadence is daily for the first 90 days. The retry loop is the whole product. AI sales roleplay training shortens ramp time for this group more than for any other.

Reps in this segment are early-career, often nervous, and often working from home, isolated from peer learning. The privacy of AI practice is doubly valuable: they can fail without their cohort watching.

For more on cold call practice specifically, we wrote about cold calling use cases.

Mid-market AEs in a methodology-driven sale

Teams running MEDDIC, SPIN, or Sandler can use AI roleplay to drill the methodology into reflexive use. The AE knows the framework intellectually after onboarding. The question is whether they apply it under pressure on a deal that's slipping. That gap is closed by repetition under simulated pressure, which is what AI roleplay supplies.

Field sales reps

Field sales is hard to coach because the reps are physically distributed. A regional manager can't sit in on every call. AI sales roleplay training gives field reps a way to practice on the road, between meetings, before walking into a hospital or a manufacturing plant or a regional bank. The mobile-first version of practice matters a lot for this segment.

Customer success and renewals teams

CS conversations often involve harder emotional dynamics than new-business sales. A customer who has emotionally checked out and is one bad QBR from leaving. A renewal where the buyer wants a 40 percent discount. AI roleplay can simulate these dynamics in ways that human roleplay can't, because the AI doesn't get fatigued by being mean.

Newly promoted managers

Often overlooked. New first-line sales managers have a coaching skill gap that no one fills. They were great reps and now have to coach reps. AI roleplay can run the manager through difficult coaching conversations — the underperforming rep, the team member with attitude problems, the call where you have to tell someone they're being put on a PIP. We've seen this used well. Most platforms don't market it.

Where AI roleplay is a worse fit

We promised honesty. AI roleplay is a worse fit for high-touch, multi-stakeholder, six-month enterprise sales where most of the practice value comes from internal alignment and account orchestration rather than individual conversation reps. The ten-minute conversation isn't where the deal is won. The orchestration of fifteen stakeholders across nine months is. AI roleplay can help individual moments inside that arc but can't simulate the whole motion.

It's also not a great fit if your sales process is so unstable that reps can't tell you what scenarios they actually need to practice. Fix the sales process first. Then practice it.

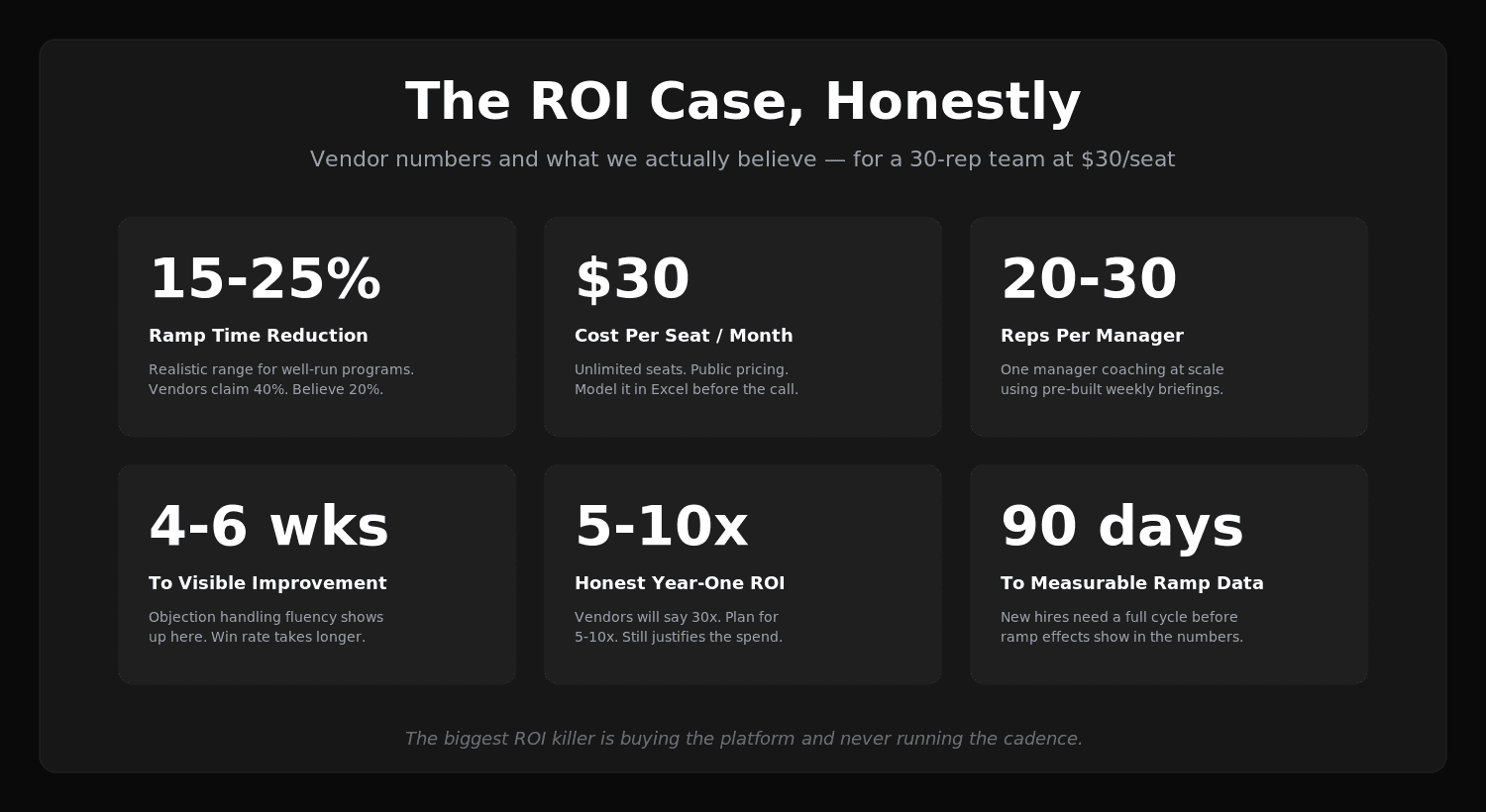

What are the benefits, with honest numbers?

Vendor benefit pages are full of confident percentages. We're going to give you the optimistic case and the skeptical case for each.

Faster ramp time for new hires

The optimistic case (vendor-sourced): new BDRs reach quota two months faster with AI roleplay. We've seen claims as high as 40 percent ramp reductions.

The skeptical case (what we actually believe): the honest number is a 15 to 25 percent reduction in ramp time in well-run programs. The 40 percent claims usually come from cherry-picked cohorts or are measured against unusually slow baselines. A 20 percent ramp reduction is still huge: for a team hiring 20 BDRs a year at a $90k OTE, that's worth roughly $360k in earlier-quota-attainment value (20 hires × $90k × 20 percent). You don't need 40 to justify the spend.

We wrote more about this in our piece on accelerating sales onboarding.

Higher win rates

The optimistic case: vendors will quote 10-20 percent win rate improvements. Some claim 30+.

The skeptical case: win rate is too noisy a metric for short-term measurement and is influenced by too many things outside of training to attribute cleanly. We believe the directional effect is real, but anyone quoting a clean win-rate delta from training in the first six months is selling something. Look at it over 12 months and at the cohort level (new BDRs hired with AI practice vs. without) rather than as a top-line team number.
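One way to check whether a cohort-level gap is bigger than noise is a permutation test. This is a minimal sketch under simplifying assumptions: each deal is treated as an independent won/lost outcome, and the `permutation_p_value` helper and the sample cohorts are hypothetical. A small p-value says the gap is unlikely to be chance; it does not rule out the territory, lead-quality, or comp-plan differences that also move win rate.

```python
import random


def win_rate(outcomes: list[int]) -> float:
    """Share of deals won; each outcome is 1 (won) or 0 (lost)."""
    return sum(outcomes) / len(outcomes)


def permutation_p_value(with_ai: list[int], without_ai: list[int],
                        n_iter: int = 10_000, seed: int = 0) -> tuple[float, float]:
    """Observed win-rate gap and a two-sided permutation p-value.

    Shuffles the cohort labels many times and counts how often a gap at
    least as large as the observed one appears by chance alone.
    """
    observed = win_rate(with_ai) - win_rate(without_ai)
    pooled = with_ai + without_ai          # new list; the inputs are not mutated
    n = len(with_ai)
    rng = random.Random(seed)              # seeded so results are reproducible
    extreme = 0
    for _ in range(n_iter):
        rng.shuffle(pooled)
        gap = win_rate(pooled[:n]) - win_rate(pooled[n:])
        if abs(gap) >= abs(observed) - 1e-12:   # tolerance for float round-off
            extreme += 1
    # Adding 1 to numerator and denominator keeps the estimate above zero
    return observed, (extreme + 1) / (n_iter + 1)


# Hypothetical cohorts: 30 of 100 deals won with AI practice, 24 of 100 without
with_ai = [1] * 30 + [0] * 70
without_ai = [1] * 24 + [0] * 76
gap, p = permutation_p_value(with_ai, without_ai)
print(f"gap={gap:.2f}, p={p:.3f}")
```

With cohorts of this size, a six-point gap is not distinguishable from chance at conventional thresholds, which is exactly why a clean win-rate delta in the first six months deserves suspicion.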

Better objection handling under pressure

The optimistic case: reps handle objections measurably better after consistent practice. Specific objections like "we're happy with our current vendor" or "send me an email" go from freeze responses to fluent recoveries.

The skeptical case: this one we're confident in, because it's directly observable in transcripts. The qualitative improvement is large and visible within four to six weeks of consistent daily practice. The harder question is whether it converts to revenue — see win rate above for the caveat.

Reduced manager coaching load

The optimistic case: AI roleplay frees managers from running roleplay sessions, saving them five-plus hours a week.

The skeptical case: the time saved is real, but the more important effect is qualitative. Managers go from coaching by gut to coaching from data. The 1:1 conversation becomes "here are the three transcripts where you struggled with discovery, let's pick one" instead of "how's the pipeline." That's the change that matters. Time savings are a side effect.

Higher rep confidence and retention

The optimistic case: reps who feel prepared for hard conversations are more confident, perform better, and stay longer.

The skeptical case: this is real but slow to show up in numbers. Retention effects take 6-12 months to be visible. Confidence effects show up in week three. Both are worth caring about. Neither is something you can put a clean dollar number on in quarter one.

For a deeper look, we wrote about the five key metrics to measure sales training ROI in 2026.

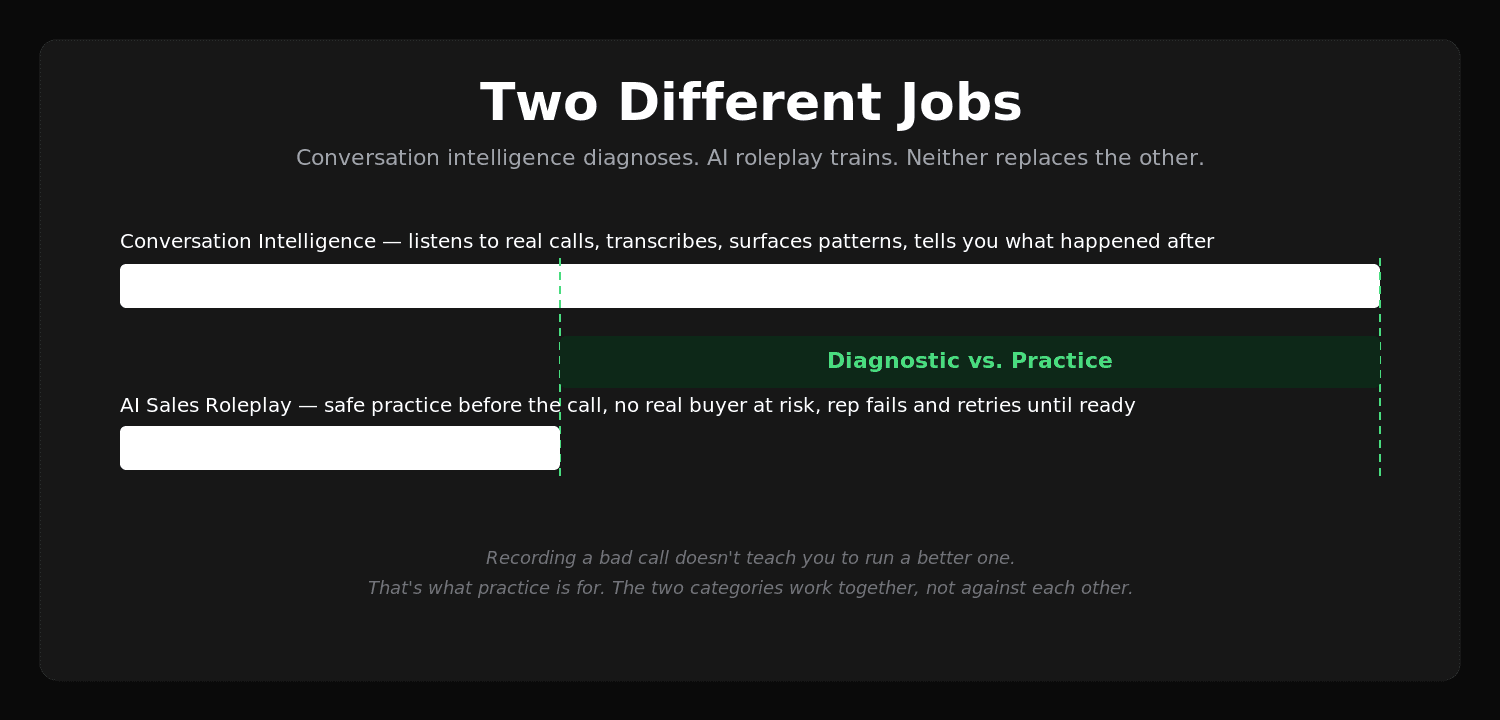

How is AI sales roleplay training different from conversation intelligence?

This confuses buyers a lot, so it's worth being precise. Both categories use AI on sales conversations. They do different jobs.

Conversation intelligence — Gong, Chorus, Avoma, the call-recording-and-analysis category — listens to real calls reps already had. It transcribes, scores, surfaces patterns, helps managers spot issues. It's diagnostic. It tells you what happened. It does not give the rep practice. The rep can't get better at a hard conversation by reviewing a recording of one they already lost.

AI sales roleplay training is the opposite. It's practice before the call, not analysis after. The rep talks to a simulated buyer, fails safely, retries, and arrives at the real call already prepared. There's no real customer involved. Nobody is risking a real deal. The rep practices on disposable pretend conversations until they're ready for real ones.

The two categories are complementary, not competitive. The best teams use both. Conversation intelligence on real calls to find the gaps. AI sales roleplay to drill the gaps closed before the next batch of calls. We wrote about conversation intelligence as a category separately if you want the longer version.

The mistake we see is teams buying conversation intelligence and assuming it'll fix skill gaps. It doesn't. It surfaces them. That's a different job. The rep still has to practice the fix, and conversation intelligence has no practice mechanism.

What ROI can you expect from AI sales roleplay training?

The honest framing is that ROI on training is harder to measure than ROI on most software. The thing being measured — rep performance — is influenced by territory, lead quality, comp plan, manager skill, product changes, market conditions. Pulling out the training contribution is real work.

Here's how we'd model it for a 30-rep team.

The optimistic case (vendor-sourced): a $30/seat platform at 30 seats is $900/month, $10,800/year. If it produces a 25 percent ramp reduction on 10 new hires per year at $90k OTE, the ramp value is roughly $225k. Plus higher win rates of, say, 5 points on $5M of pipeline = $250k of attributed revenue. Plus retention benefits worth somewhere in the low-to-mid hundreds of thousands. Vendors will run the math up to a 20-30x ROI.

The skeptical case (what we actually believe): the ramp value is real but maybe two-thirds of the headline number. The win rate effect at five points is on the optimistic end and we'd plan for two to three. The retention effect is real but takes longer to show than the math implies. Net: a well-run AI roleplay program at $30/seat for 30 reps probably returns 5-10x in the first year, and more in years two and three as the practice cadence becomes habit. That's still a great return for a sales tool. It's just not 30x.
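The arithmetic behind both cases can be laid out in a few lines. This sketch uses the inputs above and the same structure (ramp value as hires × OTE × ramp reduction, win-rate value as win-rate gain × pipeline); the `roi_model` function is illustrative and leaves retention at zero because no point estimate is given for it. Note that dividing by the license fee alone gives roughly 44x for the optimistic inputs and roughly 23x for the skeptical ones, so the 20-30x and 5-10x figures presumably net out costs or attribution discounts that the sketch does not model, such as rollout effort, rep practice time, or the share of any gain not attributable to training.

```python
def roi_model(seats: int, price_per_seat_month: float, hires_per_year: int,
              ote: float, ramp_reduction: float, win_rate_gain: float,
              pipeline: float, retention_value: float = 0.0) -> dict[str, float]:
    """First-year value model with the same structure as the text.

    ramp value     = hires per year x OTE x fractional ramp reduction
    win-rate value = win-rate gain (as a fraction, 0.05 = 5 points) x pipeline
    The multiple divides total value by the license fee only.
    """
    annual_cost = seats * price_per_seat_month * 12
    ramp_value = hires_per_year * ote * ramp_reduction
    win_value = win_rate_gain * pipeline
    total = ramp_value + win_value + retention_value
    return {
        "annual_cost": annual_cost,
        "ramp_value": ramp_value,
        "win_value": win_value,
        "multiple_on_license": total / annual_cost,
    }


# Optimistic inputs: 25 percent ramp reduction, +5 win-rate points
optimistic = roi_model(30, 30, 10, 90_000, 0.25, 0.05, 5_000_000)
# Skeptical inputs: ramp value at two-thirds, +2 win-rate points
skeptical = roi_model(30, 30, 10, 90_000, 0.25 * 2 / 3, 0.02, 5_000_000)

for label, r in (("optimistic", optimistic), ("skeptical", skeptical)):
    print(f"{label}: ${r['ramp_value'] + r['win_value']:,.0f} of value, "
          f"{r['multiple_on_license']:.0f}x license cost")
```

Keeping the model this explicit makes it easy to swap in your own hiring plan, OTE, and pipeline, and to see which input the ROI is most sensitive to.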

The way to make ROI come in higher is to actually use it. The biggest ROI killer in this category is buying the platform and never running the cadence — practice that doesn't happen has zero ROI. We've watched that movie before.

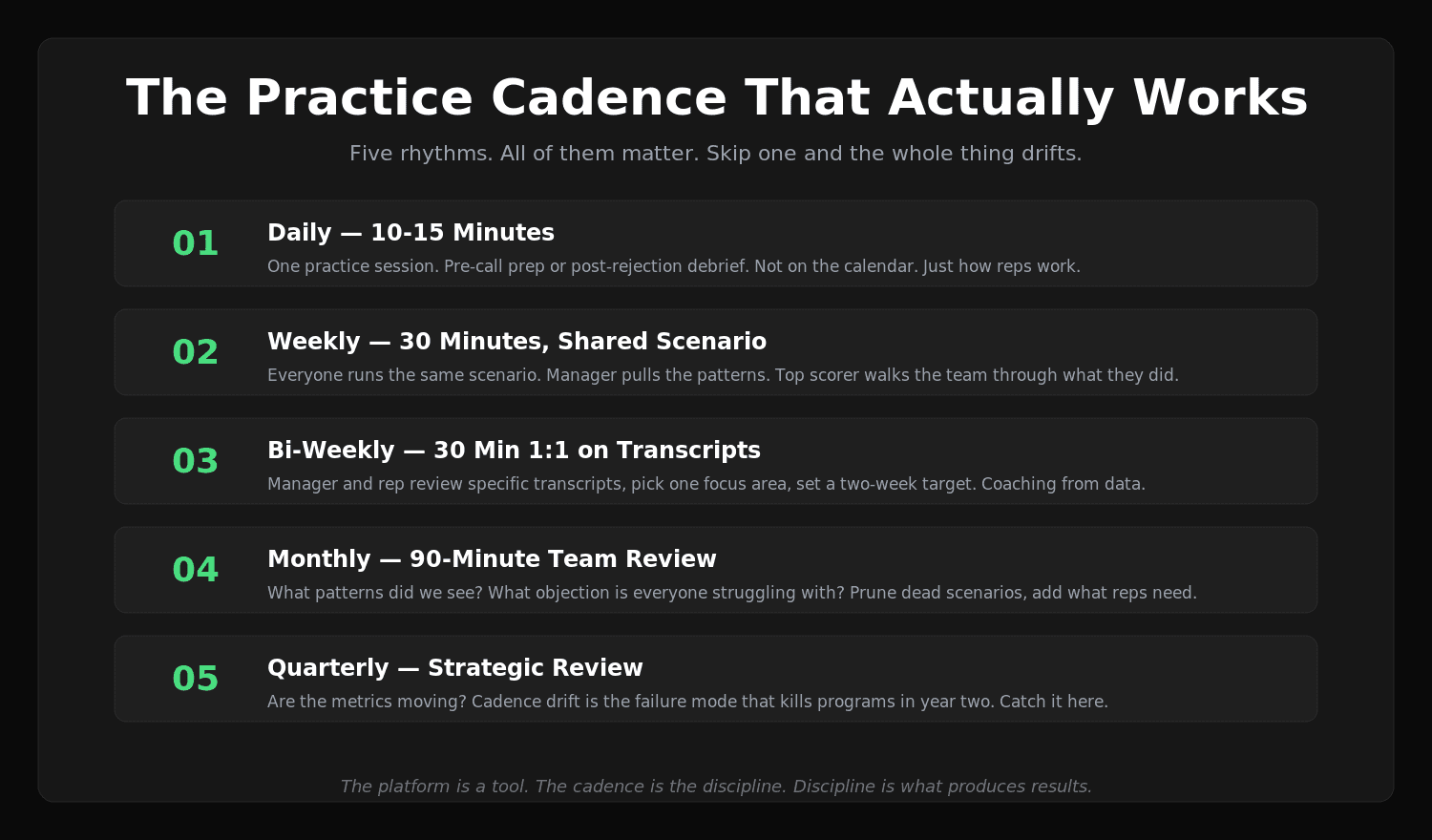

What does a practical AI sales roleplay training cadence look like?

The cadence is where most rollouts succeed or fail. Here's a workable one for a typical inside sales team:

Daily (10-15 min) — every rep runs at least one practice session. Pre-call prep before their hardest call of the day, or end-of-day reflection on the call that didn't go well. Frictionless. Not a meeting. Not on the calendar. Just part of how they work.

Weekly (30 min) — team-level practice on a shared scenario. Everyone runs the same objection or the same discovery scenario. Manager pulls the patterns into the team meeting. Rep with the highest score that week walks the team through what they did. Healthy social pressure, useful comparison, no public failure.

Bi-weekly (1:1, 30 min) — manager and rep review the rep's practice transcripts. Pick one area to focus on. Set a target for the next two weeks. The 1:1 stops being a forecast call and becomes a coaching conversation, because the data finally exists to support it.

Monthly (90 min) — team review. What patterns did we see? What objection is everyone struggling with? What scenario should we add to the library? This is the slot for adjusting the practice menu based on what's actually happening in the market.

Quarterly — strategic review. Are the metrics moving? Should we change methodology? Are there reps who aren't using the platform — why? Cadence drift is the failure mode that kills programs in year two. The quarterly review catches it.

The teams that hit those rhythms are the ones that get the ROI in the optimistic range. The teams that buy the platform and skip the cadence get the ROI in the disappointing range. The platform isn't magic. The cadence is.
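Writing the cadence down also makes its time cost explicit. The sketch below totals the minutes per rep per week implied by the schedule above. The assumptions are ours: five practice days a week, 52/12 weeks per month, and the quarterly review left out because no duration is given. The `weekly_minutes` helper is illustrative.

```python
# Hypothetical encoding of the cadence: (minutes low, minutes high, sessions per week).
# Assumptions: 5 practice days a week, 52/12 weeks per month, quarterly review omitted.
WEEKS_PER_MONTH = 52 / 12

CADENCE = {
    "daily practice": (10, 15, 5),
    "weekly team scenario": (30, 30, 1),
    "bi-weekly 1:1": (30, 30, 1 / 2),
    "monthly team review": (90, 90, 1 / WEEKS_PER_MONTH),
}


def weekly_minutes(cadence: dict[str, tuple[float, float, float]]) -> tuple[float, float]:
    """Low and high estimates of minutes per rep per week for a cadence."""
    low = sum(lo * per_week for lo, _, per_week in cadence.values())
    high = sum(hi * per_week for _, hi, per_week in cadence.values())
    return low, high


low, high = weekly_minutes(CADENCE)
print(f"{low:.0f}-{high:.0f} minutes per rep per week")
```

Under these assumptions the schedule comes to roughly 116 to 141 minutes per rep per week, most of it the rep's own daily practice. That is a small enough number to write on the single-page plan before signing a contract.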

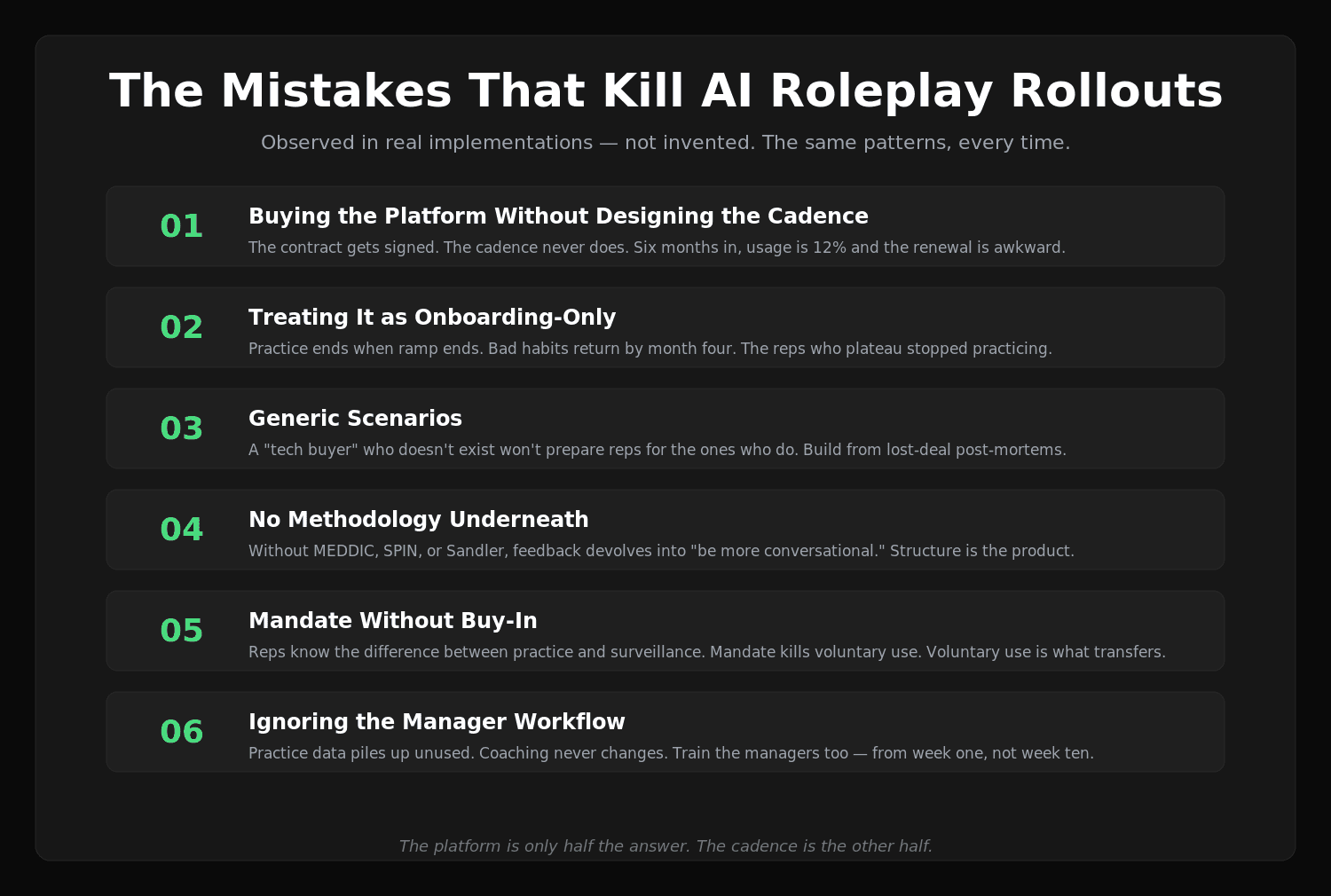

What are the common mistakes teams make with AI sales roleplay training?

We've watched enough rollouts to see the patterns.

1. Buying the platform without designing the cadence

The rollout fails the same way every time. Procurement is enthusiastic, the contract gets signed, the platform gets provisioned, and then nobody runs a cadence. Six months in, usage is at 12 percent and the contract goes up for review.

The fix: before you sign, write the cadence on a single page. Who practices when, who reviews what, what happens in the weekly 1:1, who's accountable. If you can't write it before you buy, you won't run it after you buy.

2. Treating it as onboarding-only

A lot of teams use AI sales roleplay training intensively for the first 90 days of a new hire's tenure and then drop it. The rep ramps, gets to live calls, and the practice cadence ends. Six months later they're back to whatever bad habits they brought from their last job.

Practice has to continue past onboarding. The reps who are good get good by practicing past the point where they need to. The reps who plateau plateau because they stopped.

3. Generic scenarios

If your scenario library is "cold call to a tech buyer," your reps will get good at practicing for a tech buyer who doesn't exist. Specificity matters. Build scenarios from your actual customer interviews, lost-deal post-mortems, and your top reps' war stories. The closer the scenario is to your reality, the more transfer you get.

4. No methodology

Without a sales methodology underneath, AI roleplay devolves into "be more conversational." Good practice has structure. Pick a methodology — MEDDIC, SPIN, Sandler, your homegrown framework — and configure the AI to score against it. The structure is what turns reps from "talking" to "selling."

5. Mandate without buy-in

Reps know the difference between a tool that helps them hit quota and a tool that managers use to surveil them. If the rollout feels like surveillance — "every rep must complete X practice sessions per week or it goes in the report" — adoption tanks. Reps will complete the minimum, in low-effort attempts, just to clear the count.

The fix: position practice as a benefit to the rep, not a compliance mechanism. The reps who use it most should be the high performers who treat it as preparation. Mandates kill voluntary use, and voluntary use is the kind that actually transfers to live calls.

6. Ignoring the manager workflow

If you roll out AI roleplay only on the rep side and managers don't change how they coach, the practice data piles up unused. The data is the input to better 1:1s. If managers don't read it, the practice doesn't compound into team improvement. Train the managers on the platform too. Build the manager workflow into the cadence from week one.

7. Measuring the wrong things

Some teams measure success by practice volume: "Reps did 1,200 sessions this quarter." That's an input metric. The output metric is whether reps got better, measured by win rate, ramp time, and objection-handling scores on real calls. Measure outputs. Inputs are easy to game and aren't what you actually care about.

We wrote a longer piece on why sales training fails under real pressure that gets into more of these.

How to implement AI sales roleplay training: a step-by-step guide

If you're starting from zero, here's the rollout we'd run.

Weeks 1-2: Document the practice you actually need

Before evaluating any platform, get clear on what your reps need to practice. Pull lost-deal post-mortems from the last quarter. Sit in on three to five reps' calls. Talk to your top performer about the moments where deals are won or lost. Write down the 10 scenarios that matter most. Don't skip this. The vendors will offer to do it for you. That's how you end up with generic scenarios. Do it yourself.

Weeks 2-4: Evaluate two or three platforms

Don't run a 17-vendor RFP. The category isn't that big, and the meaningful differences show up quickly. Pick two or three platforms that look credible. Run a real practice session on each, ideally a demo where you, the buyer, play the rep. Notice the voice quality, the pushback strength, the feedback specificity, and the retry friction. Notice whether the manager workflow is real or just marketing.

Week 4: Pilot with a focused group

Don't roll out to the whole team on day one. Pick a focused pilot: five to ten reps, a mix of new and experienced, drawn from a mix of segments. Run it for six weeks. Track usage, qualitative feedback, and any leading indicators (objection-handling fluency, confidence on calls, manager 1:1 quality). The pilot data tells you whether to expand the program or kill it.

Week 10: Expand and codify

If the pilot worked, write the cadence on one page. Who practices when. What scenarios are required vs. optional. What manager 1:1s look like. What the weekly team meeting includes. Roll out to the whole team with the cadence already designed.

Week 14+: Iterate

Every month, prune the scenarios that aren't getting practiced and add the ones reps are asking for. Every quarter, look at the metrics — ramp time, win rate, manager satisfaction with their own coaching — and adjust. The platform is a tool. The cadence is the discipline. The discipline is what produces results.

We have a longer piece on training reps practically as a manager that goes deeper on the manager-side workflow.

Frequently Asked Questions

What's the difference between AI sales roleplay training and a chatbot?

A chatbot answers questions. AI sales roleplay training simulates a sales conversation with a buyer who has personality, objectives, skepticism, and pushback. The rep talks. The AI buyer talks back in character. The conversation has structure — opening, discovery, value, objections, close. After the conversation, the AI scores it and surfaces specific things to fix. A chatbot has none of that structure. The two are different categories.

Can AI sales roleplay replace human roleplay entirely?

It can replace most of what Roleplay Theatre was supposed to do, which is roughly 80 percent of the value. The remaining 20 percent (multi-stakeholder dynamics, internal alignment practice, complex enterprise account orchestration) still benefits from human roleplay run well. The honest answer is that AI roleplay handles high-frequency, individual-conversation practice better, and human roleplay handles rare, complex, multi-party scenarios better. Most teams need a lot of the first and a little of the second.

How is voice-first roleplay different from text-based AI training?

Sales is a voice job. Text-based AI training tools have the rep type into a chat box, which means they're practicing for a different medium than the one they actually sell in. Voice-first means the rep talks out loud, hears their own pace and tone, gets interrupted, has to think on their feet. The cognitive demand is different. The practice transfers. Text-based practice doesn't transfer the same way. We'd consider voice-first non-negotiable for any team where the actual job is voice calls.

How long before reps see results from AI sales roleplay training?

Confidence and fluency improvements show up in weeks two and three. Specific objection handling gets visibly better in weeks four to six. Ramp time effects on new hires show up at the 90-day mark. Win rate effects, if you're going to see them, take six to twelve months and require enough deal volume to be statistically meaningful. Anyone promising a clean win-rate lift in month one is selling something.
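To make the deal-volume point concrete, here's a rough sketch in Python of a standard pooled two-proportion z-test, one common way to check whether a win-rate change is more than noise. The function name and the win/deal counts are hypothetical, chosen for illustration only; they are not customer data, and the test assumes deals are independent and that each period has enough closed deals for the normal approximation to hold.

```python
import math


def win_rate_change_p_value(wins_before: int, deals_before: int,
                            wins_after: int, deals_after: int) -> float:
    """Two-sided p-value for a change in win rate (pooled two-proportion z-test).

    Hypothetical helper for illustration only. Assumes independent deals and
    enough closed deals in each period for the normal approximation to hold.
    """
    rate_before = wins_before / deals_before
    rate_after = wins_after / deals_after
    # Pooled win rate under the null hypothesis that nothing actually changed
    pooled = (wins_before + wins_after) / (deals_before + deals_after)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / deals_before + 1 / deals_after))
    z = (rate_after - rate_before) / std_err
    # Standard normal CDF computed via the error function
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return 2 * (1 - cdf)


# Hypothetical scenarios: win rate moves from 20% to 23% in both cases.
small_team = win_rate_change_p_value(20, 100, 23, 100)
large_team = win_rate_change_p_value(400, 2000, 460, 2000)
print(f"100 deals per period:   p = {small_team:.2f}")
print(f"2,000 deals per period: p = {large_team:.2f}")
```

With the same three-point lift, the 100-deal scenario produces a p-value around 0.6, which is indistinguishable from noise, while the 2,000-deal scenario lands around 0.02. That's the arithmetic behind the claim above: a credible win-rate read needs both time and volume.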

Do reps actually use AI sales roleplay training, or does it sit idle?

Both, depending on the rollout. Mandate-driven rollouts get low-effort compliance use. Cadence-driven rollouts where the reps see practice as a competitive advantage get real engagement. The platform is only half the answer. The cadence is the other half. We've watched the same platform succeed in one team and fail in another based on rollout quality, not the platform itself.

Can AI roleplay handle our specific industry vocabulary?

The good platforms can be configured with your industry's terminology, regulatory constraints, buyer personas, and competitive dynamics. The bad platforms ship a generic library. The configuration question is one of the things you should test in any evaluation. Run a real-world scenario from your sales motion and see how the AI handles it. If it sounds generic, the platform isn't ready for you.

How does AI roleplay handle methodology like MEDDIC or SPIN?

The good platforms score against your chosen methodology. MEDDIC, SPIN, Sandler, BANT: the AI feedback is structured around the framework. Under MEDDIC, for example, a discovery call gets evaluated on whether the rep identified Metrics, the Economic Buyer, Decision Criteria, and so on. The bad platforms give generic "good communication" feedback that ignores methodology. We'd consider methodology-native scoring a baseline requirement, not a nice-to-have.

Is AI sales roleplay training appropriate for senior AEs, or just BDRs?

Both, but differently. BDRs need volume of practice on a small set of scenarios. Senior AEs need targeted practice on rarer, higher-stakes scenarios — competitive bake-offs, late-stage objections, multi-stakeholder calls. The same platform can serve both if it's flexible. The fixed-library platforms work better for BDRs and worse for senior AEs.

Does SecondBody's Rory work for our methodology?

Rory is methodology-native — SPIN, MEDDIC, Sandler, BANT, and custom frameworks are configurable. The scoring rubrics are tied to the methodology you select. If you have a homegrown framework, we'll configure it during onboarding. The default scoring uses generic best practices, but methodology-native scoring is the recommended setup and it's where the practice value is highest.

How does SecondBody compare to other AI sales roleplay platforms?

We wrote a longer piece comparing 17 platforms, including ours, in the AI sales training software roundup. The short version: SecondBody was built voice-first from day one rather than as a text tool with voice bolted on, has unlimited seats at $30/user/month so practice isn't rationed, has public pricing you can model in Excel, and produces pre-1:1 manager briefings so coaching scales to 20-30 reps per manager. Customers include Cyera and others under NDA.

How do I run a sales roleplay tool comparison without getting marketing-spun?

The useful sales roleplay tool comparison is run in three steps, not seventeen. First, define the two or three scenarios your reps actually fail on — cold opens, a specific objection, a discovery moment — from your real call data. Second, run those exact scenarios on two or three platforms with the same rep, back to back. Notice voice quality, pushback strength, feedback specificity, retry friction. Third, ignore feature lists and bake-off marketing. The platform that wins is the one your rep voluntarily returns to on Tuesday morning without being asked. Anything else is theater dressed as evaluation.

What does it cost?

Pricing is public on the SecondBody website. The Pro tier is $30/user/month with unlimited seats and full feature access. There's a starter tier you can begin with today. We don't run free trials in the SaaS sense, but the demo path lets you run a real practice session with Rory and see whether the practice quality matches what we've described.

Is SecondBody enterprise-ready?

SOC 2 compliant. Integrates with Aircall, Cloudtalk, Momentum.io, Fathom, and TeamTailor today, with Salesforce and HubSpot integrations shipping in 2026. Customers run from mid-market through enterprise. The pre-1:1 manager briefings are designed for sales orgs of 50+ reps where individual coaching doesn't scale.

Stop running Roleplay Theatre. Start practicing.

The thing we want you to take away from this 7,000-word piece is that the format of practice matters more than the choice of vendor. If your team is running Roleplay Theatre — friendly buyers, infrequent cadence, peer-pressure stakes, decayed feedback — switching vendors won't fix anything. The format itself is the problem. Roleplay Theatre is what happens when an organization performs the appearance of practice without delivering practice. It's been the default for decades because the alternative — high-volume, high-quality, individualized practice — required coaching capacity that didn't exist.

AI sales roleplay training is the first mechanism we've seen that makes the alternative real. Not because the technology is magic, but because it removes the bottleneck that turned roleplay into theater in the first place. The buyer is always available, always in character, never tired, never social. The cadence can be daily because nothing is scarce except the rep's willingness to practice. The feedback is immediate and specific. The manager-side data turns coaching into a real activity instead of a forecast call with a coaching label.

If your reps are going to spend the next year of their career having a thousand sales conversations, they should arrive at each one having already had a hundred similar ones in safety. That's not a moonshot. That's just practice, finally available at a cadence that matches the job.

If your team wants daily voice-first AI sales coaching and roleplay practice, with unlimited seats and pricing you can actually see, SecondBody has a tier you can start with today, or you can book a demo and run a real session with Rory yourself. Either way, the next step is to stop running theater and start running practice.

Good luck out there.

A buyer who doesn't pull punches. Feedback that doesn't decay. A cadence that doesn't need a manager's calendar.

SecondBody is the AI sales roleplay training platform built for the daily practice cadence: voice-first sessions with Rory, your AI coach, who plays the buyer, scores every call, and surfaces exactly what to fix, so reps stop running Roleplay Theatre and start building real reflexes. Explore cold call practice or book a demo.